
Data quality tools play a critical role in today’s data centers. Given the complexity of the Cloud era, there’s a growing need for data quality software to help with data analytics and data mining. The best data quality software efficiently analyzes and preps data from numerous sources, including databases, e-mail, social media, logs, and the Internet of Things (IoT).

Data quality tools typically address four basic areas: data cleansing, data integration, master data management, and metadata management. They typically identify errors and anomalies through the use of algorithms and lookup tables. Over the years, these tools have become far more sophisticated and automated, yet also easier to use. They now tackle numerous tasks, including validating contact information and mailing addresses, data mapping, data consolidation associated with extract, transform and load (ETL) tools, data validation reconciliation, sample testing, data analytics and all forms of Big Data handling.

Identifying the right data quality management solution is important — and it hinges on many factors, including how and where an organization stores and uses data, how data flows across networks, and what type of data a team is attempting to tackle. Although basic data quality tools are available for free through open source frameworks, many of today’s solutions offer sophisticated capabilities that work with numerous applications and database formats. Of course, it’s important to understand what a particular solution can do for your enterprise — and whether you may need multiple tools to address more complex scenarios.

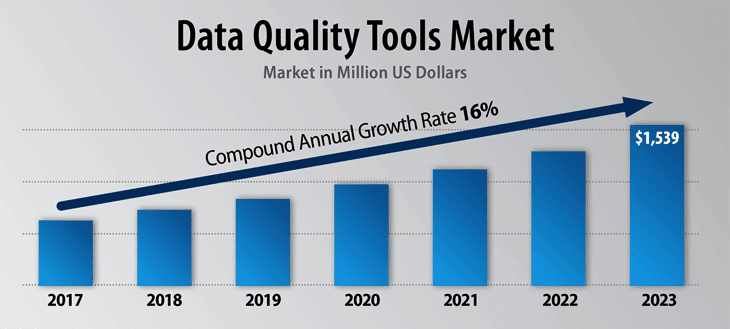

The market for data quality tools and software is expected to enjoy exceptionally rapid growth in the years ahead.

Identify your data challenges.

Incorrect data, duplicate data, missing data and other data integrity issues can significantly impact — and undermine — the success of a business initiative. A haphazard or scattershot approach to maintaining data integrity may result in wasted time and resources. It can also lead to subpar performance and frustrated employees and customers. It’s important to start by conducting an analysis of existing data sources, current tools in use and problems and issues that occur. This delivers insight into gaps and possible fixes.
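
As a rough illustration of this kind of analysis, the Python sketch below profiles a handful of records for missing values and duplicate keys. The `profile` function, the field names and the sample records are all hypothetical; commercial profiling tools go considerably further.

```python
from collections import Counter

def profile(records, key_fields):
    """Count missing values per field and duplicate records by key."""
    missing = Counter()
    keys = Counter()
    for row in records:
        for field, value in row.items():
            if value is None or str(value).strip() == "":
                missing[field] += 1
        # Normalize key fields so case and whitespace differences
        # do not hide duplicates.
        key = tuple(str(row.get(f) or "").strip().lower() for f in key_fields)
        # Only rows with a complete key can be compared for duplication.
        if all(key):
            keys[key] += 1
    duplicates = sum(count - 1 for count in keys.values() if count > 1)
    return {"rows": len(records), "missing": dict(missing), "duplicates": duplicates}

# Hypothetical sample data.
records = [
    {"name": "Ann Lee", "email": "ann@example.com", "zip": "30301"},
    {"name": "ann lee ", "email": "ANN@example.com", "zip": ""},
    {"name": "Bo Chan", "email": None, "zip": "94105"},
]
report = profile(records, key_fields=["email"])
print(report)
```

A report like this will not fix anything by itself, but it gives a first view of where the gaps are before any tool is selected.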

Understand what data quality tools can and cannot do.

There’s no fix for completely broken, incomplete or missing data. Data cleansing tools cannot perform magic on dated legacy systems or sloppy spreadsheets. If your organization identifies gaps and shortcomings in its data collection and management methods, it may be necessary to go back to the drawing board and examine the entire data framework. This includes the data management tools you’re currently using, how your organization manages and stores data, and what workflows and processes could be changed and improved.

Understand the strengths and weaknesses of various data cleansing tools.

Not all data quality management tools are created equal. Some are designed for specific applications such as Salesforce or SAP; others excel at spotting errors in physical mailing addresses or e-mail; still others tackle IoT data or pull together disparate data types and formats. In addition, it’s important to understand how a data cleansing tool works and its level of automation, as well as specific features that may be required to accomplish specific tasks. Finally, it’s crucial to consider factors such as data controls, security and licensing costs.

Key insight: Cloudingo is a prominent data integrity and data cleansing tool designed for Salesforce.

Cloudingo tackles everything from deduplication and data migration to spotting human errors and data inconsistencies. The platform handles data imports, delivers a high level of flexibility and control, and includes strong security protections. The application uses a drag-and-drop graphical interface to eliminate coding and spreadsheets. It includes templates with filters that allow for customization, and it offers built-in analytics. APIs support both REST and SOAP. This makes it possible to run the application from the cloud or from internal systems.

The data cleansing management tool handles all major requirements, including merging duplicate records and converting leads to contacts; deduplicating import files; deleting stale records; automating tasks on a schedule; and providing detailed reporting functions about change tracking. It offers near real-time synchronization of data. The application includes strong security controls that include permission-based logins and simultaneous logins. Cloudingo supports unique and separate user accounts and tools for auditing who has made changes.

Key insight: Data Ladder has established itself as a leader in data cleansing through a comprehensive set of tools that clean, match, dedupe, standardize and prepare data.

Data Ladder is designed to integrate, link and prepare data from nearly any source. It uses a visual interface and taps a variety of algorithms to identify phonetic, fuzzy, abbreviated, and domain-specific issues. The company’s DataMatch Enterprise solution aims to deliver an accuracy rate of 96 percent for between 40K and 8M record samples, based on an independent analysis. It uses multi-threaded, in-memory processing to boost speed and accuracy, and it supports semantic matching for unstructured data.
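
To give a sense of what fuzzy matching involves in general, here is a minimal Python sketch built on the standard library’s `difflib.SequenceMatcher`. It is not Data Ladder’s algorithm; the `normalize` and `likely_duplicates` helpers, the 0.85 threshold and the sample names are assumptions made for illustration.

```python
from difflib import SequenceMatcher
from itertools import combinations

def normalize(name):
    """Lowercase, replace punctuation with spaces, collapse whitespace."""
    kept = "".join(ch if ch.isalnum() or ch.isspace() else " " for ch in name.lower())
    return " ".join(kept.split())

def similarity(a, b):
    """Similarity ratio between 0.0 and 1.0 on the normalized strings."""
    return SequenceMatcher(None, normalize(a), normalize(b)).ratio()

def likely_duplicates(names, threshold=0.85):
    """Return pairs whose similarity meets the (assumed) threshold."""
    pairs = []
    for a, b in combinations(names, 2):
        score = similarity(a, b)
        if score >= threshold:
            pairs.append((a, b, round(score, 2)))
    return pairs

# Hypothetical company names.
names = ["Acme Corp.", "ACME Corp", "Acme Corporation", "Apex Industries"]
pairs = likely_duplicates(names)
print(pairs)
```

Comparing every pair scales quadratically with the number of records, which is one reason production tools rely on blocking, indexing and in-memory processing rather than a simple loop like this one.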

Data Ladder supports integrations with a vast array of databases, file formats, big data lakes, enterprise applications and social media. It provides templates and connectors for managing, combining and cleansing data sources. This includes Microsoft Dynamics, Sage, Excel, Google Apps, Office 365, SAP, Azure Cosmos database, Amazon Athena, Salesforce and dozens of others. The data standardization features draw on more than 300,000 pre-built rules, while allowing customizations. The system uses proprietary built-in pattern recognition, but it also lets organizations build their own RegEx-based patterns visually.

Key insight: IBM’s data quality application, available on-premises or in the cloud, offers a broad, comprehensive approach to data cleansing and data management.

The focus is on establishing consistent and accurate views of customers, vendors, locations and products. InfoSphere QualityStage is designed for big data, business intelligence, data warehousing, application migration and master data management. IBM offers a number of key features designed to produce high quality data. A deep data profiling tool delivers analysis to aid in understanding content, quality and structure of tables, files and other formats. Machine learning can auto-tag data and identify potential issues.

The platform offers more than 200 built-in data quality rules that control the ingestion of bad data. The tool can route problems to the right person so that the underlying data problem can be addressed. A data classification feature identifies personally identifiable information (PII) that includes taxpayer IDs, credit cards, phone numbers and other data. This helps eliminate duplicate records or orphan data that can wind up in the wrong hands. The platform supports strong governance and rule-based data handling. It includes strong security features.
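
Rule-based classification of sensitive fields can be pictured with a short Python sketch. This is an illustrative approximation and not IBM’s implementation: the three regular expressions assume US-style formats, and the `classify_value` helper and label names are hypothetical. A Luhn checksum is used to cut down on false positives for card numbers.

```python
import re

# Assumed US-style formats; real classifiers cover many more patterns.
SSN = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")
CARD = re.compile(r"\b\d{4}[ -]?\d{4}[ -]?\d{4}[ -]?\d{4}\b")
PHONE = re.compile(r"\b\d{3}[ .-]\d{3}[ .-]\d{4}\b")

def luhn_ok(digits):
    """Return True if the digit string passes the Luhn checksum."""
    total = 0
    for i, ch in enumerate(reversed(digits)):
        d = int(ch)
        if i % 2 == 1:  # double every second digit from the right
            d *= 2
            if d > 9:
                d -= 9
        total += d
    return total % 10 == 0

def classify_value(text):
    """Return the set of PII labels detected in a text value."""
    labels = set()
    if SSN.search(text):
        labels.add("taxpayer_id")
    for match in CARD.finditer(text):
        if luhn_ok(re.sub(r"\D", "", match.group())):
            labels.add("credit_card")
    if PHONE.search(text):
        labels.add("phone")
    return labels

print(classify_value("card 4111 1111 1111 1111"))
print(classify_value("call 404-555-0123"))
```

Pattern matching alone will miss unusual formats and flag some harmless values, so rules like these are typically paired with review workflows.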

Key insight: Informatica has adopted a framework that handles a wide array of tasks associated with data quality and Master Data Management (MDM).

Informatica’s offering includes role-based capabilities; exception management; artificial intelligence insights into issues; pre-built rules and accelerators; and a comprehensive set of data quality transformation tools. Informatica’s Data Quality solution is adept at handling data standardization, validation, enrichment, deduplication, and consolidation. The vendor offers versions designed for cloud data residing in Microsoft Azure and AWS.

The vendor also offers a Master Data Management (MDM) application that addresses data integrity through matching and modeling; metadata and governance; and cleansing and enriching. Among other things, Informatica MDM automates data profiling, discovery, cleansing, standardizing, enriching, matching, and merging within a single central repository. The MDM platform supports nearly all types of structured and unstructured data, including applications, legacy systems, product data, third party data, online data, interaction data and IoT data.

Key insight: OpenRefine, formerly known as Google Refine, is a free open source tool for managing, manipulating and cleansing data, including big data.

OpenRefine can accommodate up to a few hundred thousand rows of data. It cleans, reformats and transforms diverse and disparate data. OpenRefine is available in several languages, including English, Chinese, Spanish, French, Italian, Japanese and German. It cleans and transforms data from a wide variety of sources, including standard applications, the web, and social media data.

The application provides powerful editing tools to remove formatting, filter data, rename data, add elements and accomplish numerous other tasks. In addition, the application can interactively change large chunks of data in bulk to fit different requirements. The ability to reconcile and match diverse data sets makes it possible to obtain, adapt, cleanse and format data for web services, websites and numerous database formats. OpenRefine also accommodates numerous extensions and plugins that work with many data sources and data formats.

Key insight: Oracle, an established legacy vendor, now makes highly effective use of the cloud.

Oracle has completely reversed its earlier stance and fully embraced the cloud, and much of its market presence in the data quality sector now comes from cloud-based versions of its Enterprise Data Quality application. To cater to its large enterprise customers, the application can also be deployed in a hybrid configuration.

To be sure, Oracle is a strong player in the data market, with its dominant position in the database sector. While the company isn’t quite that dominant in the data quality market, its solution is well regarded for many of the standard functions of data quality, including merging, cleansing and – perhaps most important – standardization. Perhaps due to this, Oracle’s Data Quality tool has seen some respectable growth over the last year. Leveraging its database strength, Data Quality can be used by itself, or as part of Oracle’s Autonomous Database application.

Key insight: Even among top data quality solutions vendors, SAP is a leader in the market.

SAP’s portfolio, which includes SAP Information Steward, SAP Data Intelligence, and SAP Data Services, is well regarded by data professionals in large enterprise settings. The company has over 20,000 customers for its data quality applications – an impressive number in this niche sector.

Remarkably, SAP – an entrenched legacy vendor – has enjoyed double digit client growth over the last year. Perhaps most impressively, the company’s SAP Data Intelligence offering is a major step past SAP’s Data Hub solution; it’s a greatly refreshed, newer version. Data Intelligence offers machine learning and artificial intelligence, a critical element in today’s data handling.

Indeed, Data Intelligence is now, in essence, the company’s flagship data quality solution. In a major nod to existing clients, Data Intelligence offers seamless interoperability with other SAP toolsets. In addition to data cataloguing and data governance, it works well with applications that handle business process, data prep and data integration. The company is well established, yet it continues to offer a very current product line.

Key insight: SAS Data Management is a role-based graphical environment designed to manage data integration and cleansing.

The SAS solution includes powerful tools for data governance and metadata management, ETL and ELT, migration and synchronization capabilities, a data loader for Hadoop and a metadata bridge for handling big data.

SAS Data Management offers a powerful set of wizards that aid in the entire spectrum of data quality management. These include tools for data integration, process design, metadata management, data quality controls, ETL and ELT, data governance, migration and synchronization and more. Strong metadata management capabilities aid in maintaining accurate data. The application offers mapping, data lineage tools that validate information, and wizard-driven metadata import and export and column standardization capabilities that aid in data integrity. Data cleansing takes place in native languages with specific language awareness and location awareness for 38 regions worldwide. The application supports reusable data quality business rules, and it embeds data quality into batch, near-time and real-time processes.

Key insight: Syncsort’s purchase of Trillium has positioned the company as a leader in the data integrity space.

It offers five versions of the plug-and-play application: Trillium Quality for Dynamics, Trillium Quality for Big Data, Trillium DQ, Trillium Global Locator and Trillium Cloud. All address different tasks within the overall objective of optimizing and integrating accurate data into enterprise systems.

Trillium Quality for Big Data cleanses and optimizes data lakes. It uses machine learning and advanced analytics to spot dirty and incomplete data, while delivering actionable business insights across disparate data sources. Trillium DQ works across applications to identify and fix data problems. The application, which can be deployed on-premises or in the cloud, supports more than 230 countries, regions and territories. It integrates with numerous architectures, including Hadoop, Spark, SAP and Microsoft Dynamics.

Trillium DQ can find missing, duplicate and inaccurate records but also uncover relationships within households, businesses and accounts. It includes an ability to add missing postal information as well as latitude and longitude data, and other key types of reference data. Trillium Cloud focuses on data quality for public, private and hybrid cloud platforms and applications. This includes cleansing, matching, and unifying data across multiple data sources and data domains.

Key insight: Talend focuses on producing and maintaining clean and reliable data through a sophisticated framework that includes machine learning, pre-built connectors and components, data governance and management and monitoring tools.

The Talend platform addresses data deduplication, validation and standardization. It supports both on-premises and cloud-based applications while protecting PII and other sensitive data. The data integrity application uses a graphical interface and drill down capabilities to display details about data integrity. It allows users to evaluate data quality against custom-designed thresholds and measure performance against internal or external metrics and standards.
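
Evaluating data against custom thresholds can be sketched in a few lines of Python. The example below is a generic illustration, not Talend’s API: the `completeness` and `evaluate` functions, the threshold values and the sample records are hypothetical, and completeness is only one of many possible quality metrics.

```python
def completeness(records, field):
    """Share of records in which the field is present and non-blank."""
    if not records:
        return 0.0
    filled = 0
    for row in records:
        value = row.get(field)
        if value is not None and str(value).strip() != "":
            filled += 1
    return filled / len(records)

def evaluate(records, thresholds):
    """Compare each field's completeness with its minimum threshold."""
    results = {}
    for field, minimum in thresholds.items():
        score = completeness(records, field)
        results[field] = {"score": round(score, 2), "passed": score >= minimum}
    return results

# Hypothetical records and thresholds.
records = [
    {"name": "Ann Lee", "email": "ann@example.com"},
    {"name": "Bo Chan", "email": ""},
    {"name": "Cy Diaz", "email": "cy@example.com"},
    {"name": "Di Evans", "email": "di@example.com"},
]
results = evaluate(records, {"name": 0.95, "email": 0.90})
print(results)
```

Here the `email` field is filled in three of four records, so it falls short of the assumed 90 percent minimum while `name` passes.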

The application enforces automatic data quality error resolution through enrichment, harmonization, fuzzy matching, and de-duplication. Talend offers four versions of its data quality software. These include two open-source versions with basic tools and features and a more advanced subscription-based model that includes robust data mapping, re-usable “joblets,” wizards and interactive data viewers. More advanced cleansing and semantic discovery tools are available only with the company’s paid Data Management Platform.

Key insight: TIBCO Clarity places a heavy emphasis on analyzing and cleansing large volumes of data to produce rich and accurate data sets.

The application is available in on-premises and cloud versions. It includes tools for profiling, validating, standardizing, transforming, deduplicating, cleansing and visualizing for all major data sources and file types. Clarity offers a powerful deduplication engine that supports pattern-based searches to find duplicate records and data. The search engine is highly customizable; it allows users to deploy match strategies based on a wide array of criteria, such as columns and thesaurus tables, and it works across multiple languages. It also lets users run deduplication against a dataset or an external master table.

A faceting function allows users to analyze and regroup data according to numerous criteria, including by star, flag, empty rows, text patterns and other criteria. This simplifies data cleanup while providing a high level of flexibility. The application supports strong editing functions that let users manage columns, cells and tables. It supports splitting and managing cells, blanking and filling cells and clustering cells. The address cleansing function works with TIBCO GeoAnalytics as well as Google Maps and ArcGIS.
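
One widely used way to cluster cell values that differ only in case, punctuation or word order is key-collision “fingerprinting.” The Python sketch below shows the general idea; it is not presented as TIBCO Clarity’s method, and the `fingerprint` and `cluster` helpers and the sample values are hypothetical.

```python
import string
from collections import defaultdict

def fingerprint(value):
    """Build a key that ignores case, punctuation and token order."""
    table = str.maketrans("", "", string.punctuation)
    cleaned = value.strip().lower().translate(table)
    tokens = sorted(set(cleaned.split()))
    return " ".join(tokens)

def cluster(values):
    """Group values that share a fingerprint; keep groups of two or more."""
    groups = defaultdict(list)
    for value in values:
        groups[fingerprint(value)].append(value)
    return [group for group in groups.values() if len(group) > 1]

# Hypothetical cell values from a city column.
values = ["New York", "new york ", "York, New", "Boston", "boston.", "Chicago"]
clusters = cluster(values)
print(clusters)
```

Each cluster is a candidate for merging into a single canonical value, usually after a person confirms that the variants really refer to the same thing.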

Key insight: Validity, the maker of DemandTools, delivers a robust collection of tools designed to manage CRM data within Salesforce.

The product accommodates large data sets and identifies and deduplicates data within any database table. It can perform multi-table mass manipulations and standardize Salesforce objects and data. The application is flexible and highly customizable, and it includes powerful automation tools. The vendor focuses on providing a comprehensive suite of data integrity tools for Salesforce administrators. DemandTools compares a variety of internal and external data sources to deduplicate, merge and maintain data accuracy.

DemandTools offers many powerful features, including the ability to reassign ownership of data. In addition, a Find/Report module allows users to pull external data, such as an Excel spreadsheet or Access database, into the application and compare it to any data residing inside a Salesforce object. Validity’s JobBuilder tool automates data cleansing and maintenance tasks by merging duplicates, backing up data, and handling updates according to preset rules and conditions.

| Vendor | Tools | Focus | Key Features |
| --- | --- | --- | --- |
| Cloudingo | Cloudingo | Salesforce data | Deduplication; data migration management; spots human and other errors/inconsistencies |
| Data Ladder | DataMatch Enterprise; ProductMatch | Diverse data sets across numerous applications and formats | Includes more than 300,000 prebuilt rules; templates and connectors for most major applications |
| IBM | InfoSphere QualityStage | Big data; business intelligence; data warehousing; application migration and master data management | Includes more than 200 built-in data quality rules; strong machine learning and governance tools |
| Informatica | Data Quality; Master Data Management | Accommodates diverse data sets; supports Azure and AWS | Data standardization, validation, enrichment, deduplication, and consolidation |
| OpenRefine | OpenRefine | Transforms, cleanses and formats data for analytics and other purposes | Powerful capture and editing functions |
| Oracle | Enterprise Data Quality | Standardization, data quality, data prep | Leverages Oracle’s investment in the cloud |
| SAP | Data Intelligence | Includes machine learning and AI | Well regarded by a large user base |
| SAS | Data Management | Managing data integration and cleansing for diverse data sources and sets | Strong metadata management; language and location awareness for 38 regions |
| Syncsort | Trillium Quality for Dynamics; Trillium Quality for Big Data; Trillium DQ; Trillium Global Locator; Trillium Cloud | Cleansing, optimizing and integrating data from numerous sources | DQ supports more than 230 countries, regions and territories; works with major architectures, including Hadoop, Spark, SAP and MS Dynamics |
| Talend | Data Quality | Data integration | Deduplication, validation and standardization using machine learning; templates and reusable elements to aid in data cleansing |
| TIBCO | Clarity | High volume data analysis and cleansing | Tools for profiling, validating, standardizing, transforming, deduplicating, cleansing and visualizing for all major data sources and file types |
| Validity | DemandTools | Salesforce data | Handles multi-table mass manipulations and standardizes Salesforce objects and data through deduplication and other capabilities |

Datamation is the leading industry resource for B2B data professionals and technology buyers. Datamation's focus is on providing insight into the latest trends and innovation in AI, data security, big data, and more, along with in-depth product recommendations and comparisons. More than 1.7M users gain insight and guidance from Datamation every year.


© 2025 TechnologyAdvice. All Rights Reserved
