The challenge of Big Data is a daunting one. We all know that data is exploding, but just how much data is out there? No one is certain, but former Google CEO Eric Schmidt has argued that we now create an entire human history’s worth of data every two days. “There was 5 exabytes of information created between the dawn of civilization through 2003,” Schmidt said a couple of years ago, “but that much information is now created every two days, and the pace is increasing.”

Those numbers may be exaggerated. RJMetrics CEO Robert J. Moore said in a TEDx talk recently that “23 exabytes of information was recorded and replicated in 2002. We now record and transfer that much information every seven days.”

Gartner believes that enterprise data will grow 650 percent in the next five years, while IDC argues that the world’s information now doubles about every year and a half. IDC says that in 2011 we created 1.8 zettabytes (or 1.8 trillion GBs) of information, which is enough data to fill 57.5 billion 32GB Apple iPads, enough iPads to build a Great iPad Wall of China twice as tall as the original.

The pace of data creation will surely increase, especially as machine-to-machine communications gets cheaper and more common. Think about how much data all of those sensor networks, burglar alarms and vehicle telematics systems will create.

According to IBM, every single day we create 2.5 quintillion bytes of data. IBM argues that the exponential growth of data means that 90 percent of the data that exists in the world today has been created in the last two years. “This data comes from everywhere: sensors used to gather climate information, posts to social media sites, digital pictures and videos, e-commerce transaction records, and cell phone GPS coordinates, to name a few.”

Of course, it’s important to remember that in early human history, anything as ephemeral as a tweet just would not have been recorded, so these comparisons can only be taken so far.

To put the data explosion in context, consider this. Every minute of every day we create

Source: Domo

Another challenge facing Big Data analysts is the fact that data is stored all over the place, in different systems. Breaking down data siloes is a major challenge. Another is creating Big Data platforms that can pull in unstructured data as easily as structured data.

When you get into the Big Data weeds, though, more arcane challenges emerge. For instance, traditional databases were not designed to take advantage of multicore processors. Thus, they are much slower at processing data than they could be, which has led to the concept of “Fast Data,” with startups such as ParStream attempting to overcome various legacy issues associated with databases.

Source: Finding the Small in Big Data

See the full video: Datamation’s Tech Videos

Whatever the exact number, we have a lot of data to contend with. Accumulating data is one thing. Doing something with it is another. You wouldn’t refer to a hoarder who accumulates old newspapers, empty tuna fish cans and live kittens as a “discerning collector,” after all. You wouldn’t visit a hoarder’s house to learn about history, the way you conceivably could from, say, an antiques collector. The signal-to-noise ratio is just too low.

With data, though, the world is full of hoarders. Digital storage is so cheap that people store everything—or, more accurately, don’t bother to delete anything. The same is true online, where online storage vendors now routinely give away GBs of data storage before charging a thin dime.

Today, businesses are struggling to contend with this out-of-control data sprawl—because if they don’t, they won’t stay competitive.

According to IBM, exponential data growth is leaving most organizations with serious blind spots. IBM found that one in three business leaders admit to frequently making decisions with no data to back them up. Their decisions are either based on information they don’t have or don’t really trust. Even more surprising, one in two business leaders admit that they don’t have working access to the information they need to effectively do their jobs.

Most business leaders and knowledge workers know that relevant data is out there, but they don’t know where. Even if they have a rough idea, they’re not sure how to extract it in any meaningful way. Finally, once they manage to find relevant data, they often aren’t sure how current or accurate it is.

This is where Big Data analytics comes in. What we’re after isn’t just raw data. We want the knowledge that comes from analyzing that data.

As technology to break down data siloes and analyze data improves, business can be transformed in all sorts of ways. The advances in analyzing Big Data allow researchers to decode human DNA in minutes, which makes businesses like 23andme feasible.

Researchers are able to predict where terrorist plan to attack, which gene is mostly likely to be responsible for certain diseases and, of course, which ads you are most likely to respond to on Facebook.

In fact, a recent study published in PNAS found that the things you “like” on Facebook reveal all sorts of probable traits about you, such as your intelligence, your gender, your sexual preference, your political leanings and more.

Some of these insights aren’t terribly surprising, such as the fact that someone who “likes” Small Business Saturdays is probably older than the typical Facebook user, but others are real head-scratchers, such as the fact that liking curly fries correlates with high intelligence. (Of course, correlation does not equal causation, and this could be random statistical noise, but Big Data Analytics will help you figure that out.)

The businesses cases for leveraging Big Data are more compelling than your addiction to curly fries. For instance, Netflix mined its subscriber data to put the essential ingredients together for its recent hit House of Cards, and subscriber data also prompted them to bring Arrested Development back from the dead.

Another example comes from one of the biggest mobile carriers in the world. France’s Orange launched its Data for Development project by releasing subscriber data for customers in the Ivory Coast. The 2.5 billion records, which were made anonymous, included details on calls and text messages exchanged between 5 million users.

A number of researchers accessed this dataset and sent Orange proposals about how this data could serve as the foundation for development projects. Proposed projects included one that showed how to improve public safety by tracking cell phone data to map where people went after emergencies; another showed how to use cellular data for disease containment, which Twitter actually already helped do during a cholera outbreak in Haiti.

Finally, the NSA’s Prism program relies on Big Data analytics as its justification for even existing. The program vacuums in metadata from cell phone calls, email exchanges, IM chats, social media and who knows what else?

As government officials defend the program, they often fall back on Big Data analysis as the key defense. If someone is a suspected terrorist, then that person’s phone records could unearth other terrorists or even help Homeland Security officials pinpoint the likely target of an upcoming attack.

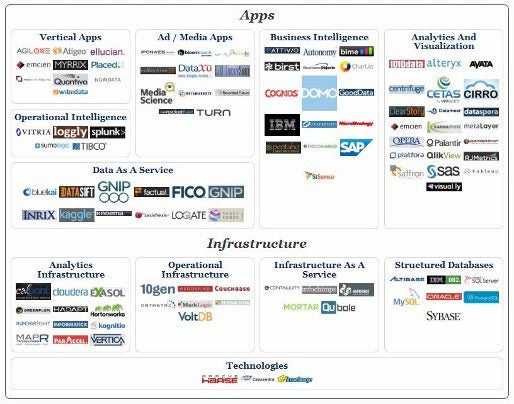

The larger Big Data market is a confusing one, comprised of everything from the underlying databases to storage to infrastructure platforms on up to the application layer, where we try to make sense of the data.

Source: BigDataLanscape.com

Today, the Big Data analytics market is still in its infancy. Big incumbents, such as Software AG, Oracle, IBM, Microsoft, SAP, EMC, and HP, compete with scrappy startups like Datameer, Alpine Data Labs, SiSense and Cloudmeter.

While the incumbents have collectively spent billions acquiring software firms in the data management and analytics space (Apema, Jacada, More IT Resources, Vertica and Vivisimo, to name only a few), the startups are sitting on impressive stacks of VC funding.

To further complicate things, companies that are a bit too old to be labeled startups have a foothold too. These include Pentaho, Splunk and Jaspersoft.

Finally, many Big Data analytics startups are targeting specific niches, such as social marketing (DataSift), programmatic advertising buying (Rocket Fuel), application performance (Cloudmeter) and even job searches and recruiting (Bright.com).

According to Wikibon, the total Big Data market reached $11.4 billion in 2012, ahead of Wikibon’s 2011 forecast. Wikibon projects that the Big Data market will reach $18.1 billion in 2013, an annual growth of 61 percent. This puts it on pace to exceed $47 billion by 2017, which translates to a 31 percent compound annual growth rate over the five year period 2012-2017.

Clearly, there is plenty of room for a number of vendors, as this market sector is still a land grab, but expect more consolidation in the near future.

This is where Big Data analytics comes in. What we’re after isn’t just raw data. We want the knowledge that comes from analyzing that data.

Open source. As Big Data gathers momentum, the focus is on open-source tools that help break down and analyze data. Hadoop and NoSQL databases are the winners here, while any proprietary technologies are frowned upon. This seems almost like a forced move in chess; after all, how can you justify creating a platform that unlocks data from various proprietary data siloes only to lock it back up again?

Market segmentation. Plenty of general-purpose Big Data analytics platforms have hit the market, but expect even more to emerge that focus on specific niches, such as drug discovery, CRM, app performance monitoring and hiring. Were the market more mature, it would make sense to build vertical-specific apps on top of general analytics platforms. That’s not how it’s happening, unless you consider the underlying database technology (Hadoop, NoSQL) as the general-purpose platform.

Expect more vertical-specific tools to emerge, which target specific analytic challenges common to business in such sectors as shipping, marketing, online shopping, social media sentiment analysis and more.

Going hand-in-hand with market segmentation is the fact that other tools are building smaller-scale analytic engines into their software suites. For instance, social media management tools, such as Hootsuite and Nimble, include data analysis as key features.

Predictive Analytics. Modeling, machine learning, statistical analysis and Big Data are often thrown together in the hopes of predicting future events and behaviors. Some things are pretty easy to predict, such as how bad weather can suppress voter turnout, while other predictions are fairly hard to pin down, such as the point when swing voters get alienated rather than influenced by push polls.

However, as data accumulates, we basically have the ability to run large-scale experiments on a continuous basis. Online retailers redesign shopping carts to figure out what design produces the most sales. Doctors are able to predict future disease risks based on things like diet, family history and the amount of exercise you get each day.

We’ve been making these sorts of predictions since the dawn of human history, of course. However, in the past, many predictions were based on gut feelings, incomplete data sets or common sense. (Common sense, after all, is what tells us that the world is flat).

Of course, just because you have plenty of data to base predictions on doesn’t mean they’ll be correct. Plenty of hedge fund managers and Wall Street traders analyzed market data in 2007 and 2008 and thought that the housing bubble would never burst. Historical data predicted that the bubble would burst, but many analysts wanted to believe things were different this time.

On the other hand, predictive analytics has been catching on in such areas as fraud detection (you know, those calls you get when you use your credit card out of state), risk management for insurance companies and customer retention.

Refocusing on the Human Decision-Making? As machine learning improves and becomes a table stakes feature in analytics suites, don’t be surprised if the human element initially gets downplayed, before coming back into vogue.

Business owners always try to limit “human error.” Talk to any security professional, and they’ll talk at length about how most security vulnerabilities are due to people making mistakes—relying on weak passwords, falling for phishing attacks or clicking on links they shouldn’t.

However, even as machine learning improves, the machines will only ask the questions we ask them to. There will be limits to how much we can learn just be relying on machines (although to hear some Big Data vendors talk, you could be excused for coming away worried about a Terminator-like future.)

You don’t have to look too hard at how Big Data is emerging to see just how important the human element is, however.

Two of the most famous Big Data prognosticators/pioneers are Billy Beane and Nate Silver. Beane popularized the idea of correlating various statistics with under-valued player traits in order to field an A’s baseball team on the cheap that could compete with deep-pocketed teams like the Yankees.

Meanwhile, Nate Silver’s effect was so strong that people who didn’t want to believe his predictions created all sorts of analysis-free zones, such as Unskewed Polls (which, ironically, were ridiculously skewed). Many think of Silver as a polling expert, but Silver is also a master at Big Data analysis.

In each case, what mattered most was not the machinery that gathered in the data and formed the initial analysis, but the human on top analyzing what this all means. People can look at polling data and pretty much treat them as Rorscharch tests. Silver, on the other hand, pours over reams of data, looks at how various polls have performed historically, factors in things that could influence the margin of error (such as the fact that younger voters are often under-counted since they don’t have landline phones) and emerges with incredibly accurate predictions.

Similarly, every baseball GM now values on-base percentage and other advanced stats, but few are able to compete as consistently on as little money as Beane’s A’s teams can. There’s more to finding under-valued players than crunching numbers. You also need to know how to push the right buttons in order to negotiate trades with other GMs, and you need to find players who will fit into your system.

As Big Data analytics becomes mainstream, it will be like many earlier technologies. Big Data analytics will be just another tool. What you do with it, though, will be what matters.

FEATURE | By Rob Enderle,

December 04, 2020

ARTIFICIAL INTELLIGENCE | By Guest Author,

November 18, 2020

FEATURE | By Guest Author,

November 10, 2020

FEATURE | By Samuel Greengard,

November 05, 2020

ARTIFICIAL INTELLIGENCE | By Guest Author,

November 02, 2020

ARTIFICIAL INTELLIGENCE | By Rob Enderle,

October 29, 2020

ARTIFICIAL INTELLIGENCE | By Rob Enderle,

October 23, 2020

FEATURE | By Rob Enderle,

October 16, 2020

FEATURE | By Cynthia Harvey,

October 07, 2020

ARTIFICIAL INTELLIGENCE | By Guest Author,

October 05, 2020

FEATURE | By Guest Author,

September 25, 2020

FEATURE | By Rob Enderle,

September 25, 2020

FEATURE | By Cynthia Harvey,

September 22, 2020

ARTIFICIAL INTELLIGENCE | By Rob Enderle,

September 18, 2020

ARTIFICIAL INTELLIGENCE | By James Maguire,

September 14, 2020

ARTIFICIAL INTELLIGENCE | By James Maguire,

September 13, 2020

FEATURE | By Rob Enderle,

September 11, 2020

FEATURE | By James Maguire,

September 09, 2020

FEATURE | By Rob Enderle,

September 05, 2020

ARTIFICIAL INTELLIGENCE | By Rob Enderle,

August 14, 2020

Datamation is the leading industry resource for B2B data professionals and technology buyers. Datamation's focus is on providing insight into the latest trends and innovation in AI, data security, big data, and more, along with in-depth product recommendations and comparisons. More than 1.7M users gain insight and guidance from Datamation every year.

Advertise with TechnologyAdvice on Datamation and our other data and technology-focused platforms.

Advertise with Us

Property of TechnologyAdvice.

© 2025 TechnologyAdvice. All Rights Reserved

Advertiser Disclosure: Some of the products that appear on this

site are from companies from which TechnologyAdvice receives

compensation. This compensation may impact how and where products

appear on this site including, for example, the order in which

they appear. TechnologyAdvice does not include all companies

or all types of products available in the marketplace.