Migrating to a cloud computing platform means your responsibility for data security goes up considerably. Data with various levels of sensitivity is moving beyond the confines of your firewall. You no longer have direct control over where it lives: depending on which cloud provider you use, your data could reside anywhere in the world.

Moving to the public cloud or using a hybrid cloud means cloud security issues can arise anywhere along the chain: while the data is prepped for migration, during migration, or within the cloud after the data arrives. You need to be prepared to address them at every step.

Data security has been incumbent on the cloud service providers, and they have risen to the occasion. No matter which platform you choose among AWS, Azure, and Google Cloud, all offer compliance with standards and regulations such as HIPAA, ISO, PCI DSS, and SOC.

However, the fact that providers offer compliance doesn’t let customers abdicate their own responsibilities. Customers retain a share of the responsibility, which creates a significant cloud computing challenge. Here are eight critical concepts for data security in the cloud.

Privacy Protection

Your data should be protected from unauthorized access regardless of your cloud decisions, which includes data encryption and controlling who can see and access what. There may also be situations where you want to make data available to certain personnel only under certain circumstances. For example, developers need realistic data for testing apps, but they don’t necessarily need to see the actual sensitive values, so you would use a redaction solution. Oracle, for instance, offers Oracle Data Redaction for its databases.
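
To make the idea concrete, here is a minimal Python sketch of field-level redaction, assuming records arrive as dictionaries and that `card_number` and `ssn` (hypothetical field names) are the sensitive columns. It is not Oracle Data Redaction or any vendor’s API; it only illustrates the general technique of masking values before data reaches a test environment.

```python
# Hypothetical names of columns treated as sensitive in this sketch.
SENSITIVE_FIELDS = {"card_number", "ssn"}


def mask_value(value: str, keep_last: int = 4, mask_char: str = "*") -> str:
    """Mask every digit except the last `keep_last`, leaving separators in place."""
    digits_to_mask = max(sum(ch.isdigit() for ch in value) - keep_last, 0)
    masked = []
    for ch in value:
        if ch.isdigit() and digits_to_mask > 0:
            masked.append(mask_char)
            digits_to_mask -= 1
        else:
            masked.append(ch)
    return "".join(masked)


def redact_record(record: dict) -> dict:
    """Return a copy of the record with sensitive fields masked; other fields pass through."""
    return {
        field: mask_value(str(value)) if field in SENSITIVE_FIELDS else value
        for field, value in record.items()
    }


row = {"name": "Test User", "card_number": "4111-1111-1111-1234", "ssn": "123-45-6789"}
print(redact_record(row))
# {'name': 'Test User', 'card_number': '****-****-****-1234', 'ssn': '***-**-6789'}
```

Masking keeps the format (separators and trailing digits), so test code that parses these fields still works while the full values never leave production.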

The first step is something you should have done already: identify the sensitive data types and define them. Discover where the sensitive data resides, classify and define the data types, and create policies based on where the data is and which data types can go into the cloud and which cannot. Too many early adopters of the cloud rushed to move all their data there, only to realize some of it needed to be kept on-premises in a private cloud.

There are automated tools to help discover and identify an organization’s sensitive data and where it resides. Amazon Web Services has Macie, while Microsoft Azure has Azure Information Protection (AIP) to classify data by applying labels. Third-party tools include Tableau, Fivetran, Logikcull, and Looker.
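
A heavily simplified Python sketch of automated discovery follows. The regular expressions, the label names, and the rule that SSNs and card numbers must stay out of the cloud are all illustrative assumptions, not how Macie or AIP work internally; the Luhn checksum is used to cut false positives on card-like digit runs.

```python
import re

# Illustrative detectors only; real classifiers combine many more
# patterns, validators, and contextual signals.
PATTERNS = {
    "US_SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "EMAIL": re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b"),
    "CARD_NUMBER": re.compile(r"\b(?:\d[ -]?){13,16}\b"),
}

# Hypothetical policy: labels that must stay on-premises.
BLOCKED_FROM_CLOUD = frozenset({"US_SSN", "CARD_NUMBER"})


def luhn_valid(candidate: str) -> bool:
    """Luhn checksum, used to discard digit runs that cannot be card numbers."""
    digits = [int(ch) for ch in candidate if ch.isdigit()]
    total = 0
    for position, digit in enumerate(reversed(digits)):
        if position % 2 == 1:  # double every second digit from the right
            digit *= 2
            if digit > 9:
                digit -= 9
        total += digit
    return total % 10 == 0


def classify(text: str) -> set:
    """Return the set of sensitive-data labels detected in the text."""
    labels = set()
    for label, pattern in PATTERNS.items():
        for match in pattern.finditer(text):
            if label == "CARD_NUMBER" and not luhn_valid(match.group()):
                continue
            labels.add(label)
    return labels


def cloud_eligible(text: str) -> bool:
    """True if none of the detected labels is blocked by policy."""
    return not (classify(text) & BLOCKED_FROM_CLOUD)


print(classify("Contact jane@example.com, card 4111 1111 1111 1111"))
```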

Preserve Data Integrity

Data integrity can be defined as protecting data from unauthorized modification or deletion. This is relatively easy in a single database, because there is only one way in or out, which you can control. But in the cloud, especially in a multicloud environment, it gets tricky.

Because of the large number of data sources and means of access, authorization becomes crucial in ensuring that only authorized entities can interact with data. This means stricter access controls, like two-factor authentication, and logging to see who accessed what. Another potential safeguard is a trusted platform module (TPM) for remote data checks.
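
One simple, widely used integrity control is a keyed hash. The sketch below assumes the data owner keeps a secret key outside the cloud (the key and data shown are hypothetical): it computes an HMAC-SHA256 tag before upload and recomputes it after retrieval, so any unauthorized modification changes the tag. This is a software check, not TPM-based attestation.

```python
import hashlib
import hmac


def integrity_tag(data: bytes, key: bytes) -> str:
    """HMAC-SHA256 tag over the data, using a key held by the data owner."""
    return hmac.new(key, data, hashlib.sha256).hexdigest()


def verify(data: bytes, key: bytes, expected_tag: str) -> bool:
    """Recompute the tag and compare it in constant time."""
    return hmac.compare_digest(integrity_tag(data, key), expected_tag)


key = b"example-key-kept-outside-the-cloud"  # hypothetical; use a managed secret in practice
original = b"quarterly-report-v1"
tag = integrity_tag(original, key)            # store alongside, or separately from, the object

print(verify(original, key, tag))                # True
print(verify(b"quarterly-report-v2", key, tag))  # False
```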

Data Availability

Downtime is a fact of life, and all you can do is minimize the impact. That’s of considerable importance with cloud storage providers because your data is on someone else’s servers. This is where the service-level agreement (SLA) is vital, and paying close attention to the details really matters.

For example, Microsoft offers 99.9% availability for major Azure storage options, but AWS offers 99.99% availability for stored objects. This difference is not trivial: 99.9% availability permits roughly 8.8 hours of downtime per year, while 99.99% permits about 53 minutes. Also, make sure your SLA allows you to specify where the data is stored. Some providers, like AWS, let you dictate the region in which data is stored. This can be important for compliance and for response time/latency.
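
The arithmetic behind availability percentages is easy to reproduce. This sketch converts a percentage into the maximum downtime it allows over a period; the 365-day year and 30-day month are simplifying assumptions, and real SLAs define their own measurement windows and exclusions.

```python
def allowed_downtime_minutes(availability_pct: float, period_hours: float) -> float:
    """Maximum downtime, in minutes, consistent with an availability percentage over a period."""
    return period_hours * 60 * (1 - availability_pct / 100)


HOURS_PER_YEAR = 365 * 24         # ignores leap years
HOURS_PER_30_DAY_MONTH = 30 * 24  # assumed billing month

for pct in (99.9, 99.99):
    per_year = allowed_downtime_minutes(pct, HOURS_PER_YEAR)
    per_month = allowed_downtime_minutes(pct, HOURS_PER_30_DAY_MONTH)
    print(f"{pct}% -> {per_year:.1f} min/year, {per_month:.1f} min/month")
```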

Every cloud storage service has a particular strength: Amazon S3 Glacier is ideal for mass cold storage of rarely accessed data, Microsoft’s Azure Blob Storage is ideal for most unstructured data, and Google Cloud SQL is built for managed relational databases such as MySQL.

Data Privacy

A huge raft of privacy laws, national and international, have forced more than a few companies to say no to the cloud because they can’t make heads or tails of the law or it’s too burdensome. And it’s not hard to see why.

Many providers may store data on servers that are not physically located in the same region as the data owner, where the laws may be different. This is a problem for firms subject to strict data residency laws. On top of that, the cloud service provider will likely absolve itself of responsibility in the SLA, which leaves the customer with full liability in the event of a breach.

As noted above, there are national and international data protection laws and standards that are your responsibility to know. In the U.S. these include the Health Insurance Portability and Accountability Act (HIPAA), the Payment Card Industry Data Security Standard (PCI DSS), the International Traffic in Arms Regulations (ITAR), and the Health Information Technology for Economic and Clinical Health Act (HITECH).

In Europe you have the very burdensome General Data Protection Regulation (GDPR), with its wide-ranging rules and stiff penalties, plus many European Union (EU) countries that now dictate that sensitive or private information may not leave the physical boundaries of the country or region in which it originates. There are also the United Kingdom Data Protection Act, the Swiss Federal Act on Data Protection, the Russian Data Privacy Law, and the Canadian Personal Information Protection and Electronic Documents Act (PIPEDA).

All of these protect the interests of the data owner, so it is in your best interest to know them and to know how well your provider complies with them.

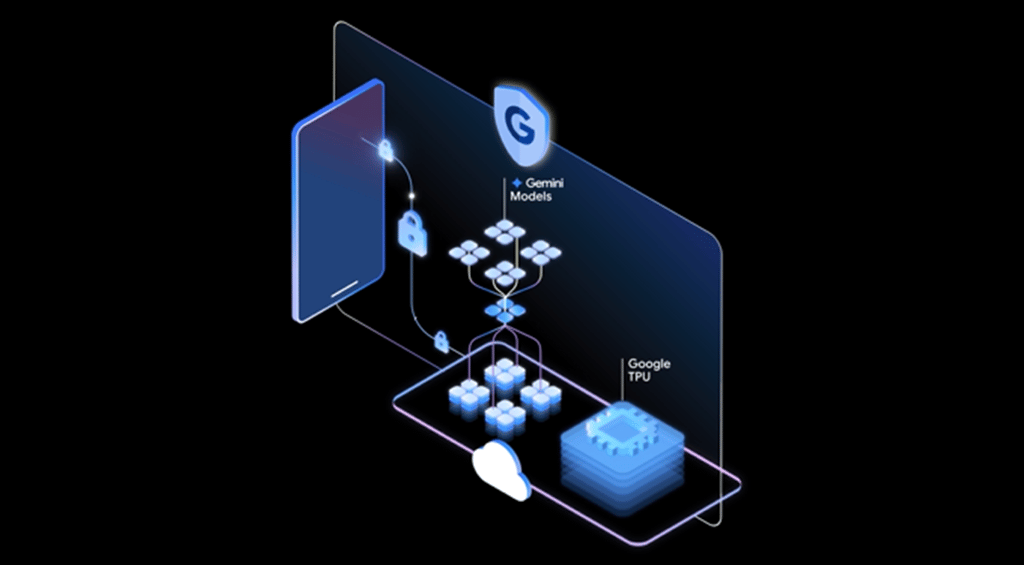

Encryption

Encryption is the means by which data privacy is protected and ensured, and encryption technologies are fairly mature. Encryption is done with key-based algorithms, and the keys are often stored by the cloud provider, although some business apps, like Salesforce and Microsoft Dynamics, use tokenization instead of keys. Tokenization substitutes sensitive data fields with non-sensitive placeholder tokens.
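
The sketch below illustrates tokenization with a hypothetical in-memory vault; it does not reflect how Salesforce, Microsoft Dynamics, or any other product implements it. A real vault would be a hardened, access-controlled service rather than a pair of Python dictionaries.

```python
import secrets


class TokenVault:
    """Hypothetical in-memory token vault illustrating tokenization.

    Sensitive values are swapped for random tokens that carry no information
    about the original; only the vault can map a token back to its value.
    """

    def __init__(self) -> None:
        self._token_to_value = {}
        self._value_to_token = {}

    def tokenize(self, value: str) -> str:
        # Reuse the existing token so the same value always maps to one token.
        if value in self._value_to_token:
            return self._value_to_token[value]
        token = "tok_" + secrets.token_hex(8)
        while token in self._token_to_value:  # regenerate on the (very unlikely) collision
            token = "tok_" + secrets.token_hex(8)
        self._token_to_value[token] = value
        self._value_to_token[value] = token
        return token

    def detokenize(self, token: str) -> str:
        # Raises KeyError for tokens the vault never issued.
        return self._token_to_value[token]


vault = TokenVault()
record = {"customer": "Acme Corp", "card_number": "4111111111111111"}
stored = {**record, "card_number": vault.tokenize(record["card_number"])}
print(stored)  # the card number is replaced by a random "tok_..." value
```

Returning the same token for the same value preserves joins and deduplication on tokenized columns, at the cost of revealing when two records share a value; a vault can instead issue a fresh token on every call if that leak matters.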

Virtually every cloud storage provider encrypts data while it is in transit. Most do it through browser interfaces over HTTPS, although some providers, like Mega and SpiderOak, use a dedicated client to perform the encryption. This should all be spelled out in the SLA.
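
On the client side, encryption in transit usually means TLS. Assuming you control the code that talks to your storage endpoint, a minimal Python sketch of a strict client configuration using the standard `ssl` module looks like this; most provider SDKs apply comparable settings on their own.

```python
import ssl


def make_client_tls_context() -> ssl.SSLContext:
    """Strict TLS settings for a client sending data to cloud storage (a sketch)."""
    # create_default_context() loads the system CA store, requires a valid
    # server certificate, and checks that the hostname matches it.
    ctx = ssl.create_default_context(ssl.Purpose.SERVER_AUTH)
    # Refuse protocol versions older than TLS 1.2.
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2
    return ctx


ctx = make_client_tls_context()
print(ctx.minimum_version, ctx.verify_mode, ctx.check_hostname)
```

The context can be passed to standard-library clients such as `urllib.request.urlopen(url, context=ctx)`.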

Many cloud services offer key management solutions that let you control access because the encryption keys are in your hands. This may prove to be a better, or at least more reassuring, option because you control who has the keys. Again, this should be spelled out in the SLA.

Threats

If you are online, you are under threat of attack; that is a fact of life. Familiar attacks such as DDoS, SQL injection, and cross-site scripting have been joined by newer concerns. Cloud service providers have a variety of security tools and policies in place, but problems still happen, usually originating in human error.

- Data breaches: These can happen in any number of ways, from the usual means (a hacked account or a lost password or laptop) to means unique to the cloud. For example, it is possible for a user on one virtual machine to listen for the signal that an encryption key has arrived on another VM on the same host. This is called “side channel timing exposure,” and it puts the victim’s security credentials in the hands of someone else.

- Data loss: While the chance of data loss is minimal short of someone logging in and erasing everything, it is possible. You can mitigate this by ensuring your applications and data are distributed across several zones and by backing up your data to off-site storage.

- Hijacked accounts: All it takes is one lost notebook computer for someone to get into your cloud account. Strong passwords and two-factor authentication can prevent this. It also helps to have policies that watch for and alert on unusual activity, like copying or deleting large amounts of data.

- Cryptojacking: Cryptojacking is the act of surreptitiously taking over a computer to mine cryptocurrency, which is a very compute-intensive process. Cryptojacking spiked in 2017 and 2018, and the cloud was a popular target because more compute resources are available there. Monitoring for unusual compute activity is the key way to stop it.
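
Both the hijacked-account and cryptojacking threats come down to spotting unusual activity. The Python sketch below assumes a hypothetical event format and illustrative thresholds: a sliding-window counter flags a user who copies or deletes an unusually large number of objects in a short time, and a z-score test flags CPU utilization far above its historical baseline. In practice these signals would come from your provider’s audit logs and metrics, with thresholds tuned to your workload.

```python
import statistics
from collections import defaultdict, deque
from dataclasses import dataclass


@dataclass
class Event:
    user: str
    action: str       # e.g. "read", "copy", "delete"
    timestamp: float  # seconds; events are assumed to arrive in time order


class BulkActivityMonitor:
    """Flags a user who performs more than `threshold` watched actions within a sliding window."""

    def __init__(self, threshold=100, window_seconds=300.0, watched=("copy", "delete")):
        self.threshold = threshold
        self.window_seconds = window_seconds
        self.watched = set(watched)
        self._recent = defaultdict(deque)  # (user, action) -> timestamps inside the window

    def observe(self, event: Event) -> bool:
        if event.action not in self.watched:
            return False
        times = self._recent[(event.user, event.action)]
        times.append(event.timestamp)
        # Drop timestamps that have fallen out of the window.
        while times and times[0] <= event.timestamp - self.window_seconds:
            times.popleft()
        return len(times) > self.threshold


def unusual_cpu(history, current, z_threshold=3.0):
    """True if current CPU utilization is far above the historical baseline."""
    mean = statistics.mean(history)
    stdev = statistics.pstdev(history)
    if stdev == 0:
        return current > mean
    return (current - mean) / stdev > z_threshold


monitor = BulkActivityMonitor(threshold=3, window_seconds=60)
alerts = [monitor.observe(Event("alice", "delete", t)) for t in (0, 1, 2, 3)]
print(alerts)  # [False, False, False, True]

baseline = [20, 22, 19, 21, 20, 23, 18, 21]  # percent CPU, hypothetical history
print(unusual_cpu(baseline, 95), unusual_cpu(baseline, 23))  # True False
```

A single high CPU reading is weak evidence on its own; sustained readings above the baseline across several intervals are a more reliable cryptojacking signal.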

Data Security and Staff

Most employee-related incidents are not malicious. According to the Ponemon Institute’s 2016 Cost of Insider Threats study, 598 of the 874 insider-related incidents in 2016 were caused by careless employees or contractors.

However, it also found that 85 incidents were due to imposters stealing credentials and 191 were caused by malicious employees and criminals. Bottom line: your greatest threat is inside your walls. Do you know your employees well enough?
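
Since the three categories add up exactly to the 874 incidents in the study, the counts can be turned into shares with a few lines of Python.

```python
# Incident counts as cited from the Ponemon Institute study.
incidents = {
    "careless employees or contractors": 598,
    "imposters stealing credentials": 85,
    "malicious employees and criminals": 191,
}

total = sum(incidents.values())
shares = {cause: round(100 * count / total, 1) for cause, count in incidents.items()}

print(total)  # 874
for cause, pct in shares.items():
    print(f"{cause}: {pct}%")
```

Careless insiders account for about 68% of the incidents and malicious insiders for about 22%.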

Contractual Data Security

The SLA should include a description of the services to be provided and their expected levels of service and reliability, along with a definition of the metrics by which the services are measured, the obligations and responsibilities of each party, remedies or penalties for failure to meet those metrics, and rules for how to add or remove metrics.
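
To see how measured metrics and remedies fit together, here is a Python sketch that turns a month’s outage minutes into an uptime percentage and maps it to a service credit. The credit tiers are invented for illustration and are not any provider’s actual terms; real SLAs also define which outages count and how they are measured.

```python
# Hypothetical credit schedule (not any provider's actual terms):
# (minimum monthly uptime %, service credit as % of the monthly fee),
# ordered from the highest threshold to the lowest.
CREDIT_TIERS = [(99.9, 0), (99.0, 10), (95.0, 25), (0.0, 100)]


def monthly_uptime_pct(outage_minutes: float, days_in_month: int = 30) -> float:
    """Uptime percentage for a month, given total minutes of qualifying outage."""
    total_minutes = days_in_month * 24 * 60
    return 100 * (total_minutes - outage_minutes) / total_minutes


def service_credit_pct(uptime_pct: float, tiers=CREDIT_TIERS) -> int:
    """Return the credit for the first tier whose threshold the uptime meets."""
    for min_uptime, credit in tiers:
        if uptime_pct >= min_uptime:
            return credit
    return tiers[-1][1]


for outage in (0, 120, 600, 3000):
    uptime = monthly_uptime_pct(outage)
    print(f"{outage} min outage -> {uptime:.2f}% uptime, {service_credit_pct(uptime)}% credit")
```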

Don’t just sign your SLA. Read it, and have several people read it, including in-house attorneys. Cloud service providers are not your friend and are not going to fall on their sword for liability. An SLA should check several boxes:

- Specifics of services provided, such as uptime and response to failure.

- Definitions of measurement standards and methods, reporting processes, and a resolution process.

- An indemnification clause protecting the customer from third-party litigation resulting from a service level breach.

This last point is crucial because it means the service provider agrees to indemnify the customer for breaches, so the provider is on the hook for any third-party litigation costs resulting from a breach. This gives the provider a major incentive to hold up its end of the security bargain.
