Data scraping is the process of extracting large amounts of data from publicly available web sources. The data is cleaned and prepared for processing and used by businesses for everything from lead generation and market research to consumer sentiment analysis and brand, product, and price monitoring. Because there are ethical and legal concerns around data scraping, it’s important to know what’s fair game and what’s not. This article explains the process, techniques, and use cases for data scraping, discusses the legal and ethical ramifications, and highlights some of the more common tools.

What is Data Scraping?

Data scraping—especially on a large scale—is a complex process involving multiple stages, tools, and considerations. At a high level, data scraping refers to the act of identifying a website or other source that contains desirable information and using software to pull the target information from the site in large volumes.

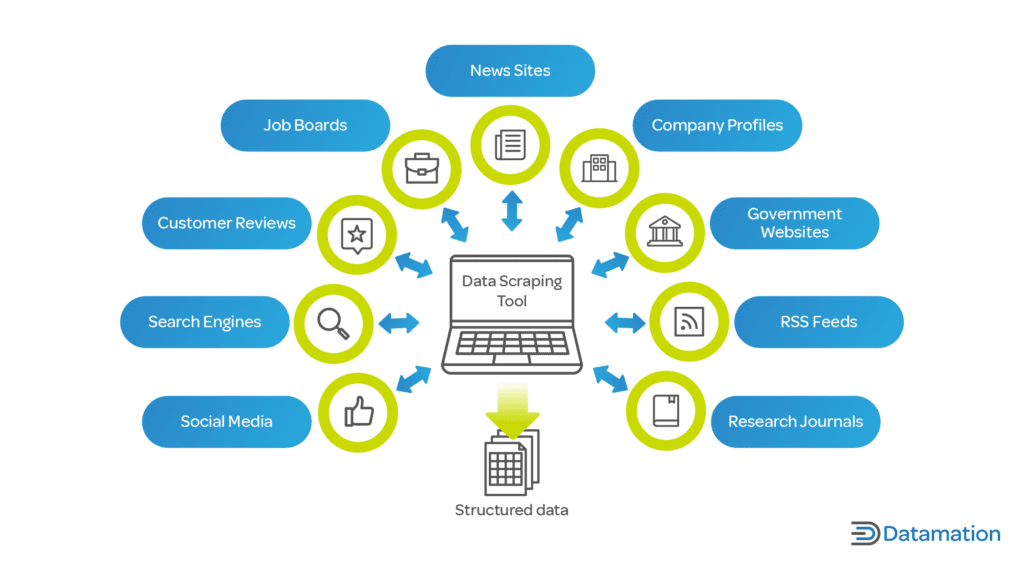

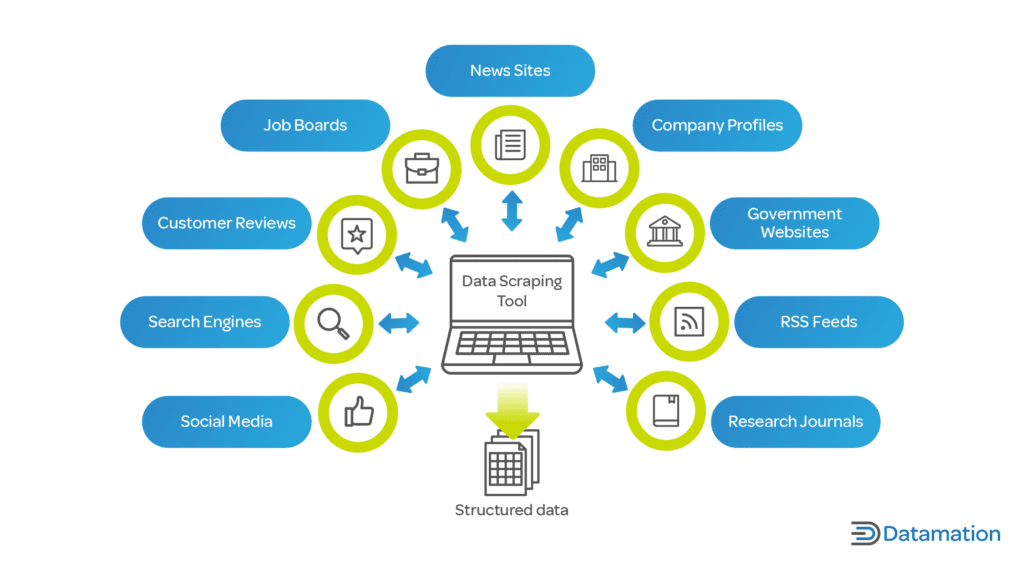

Sources for data can range from e-commerce sites and social media platforms to public databases and product review sites. Targeted data is usually text-based. Data scraping generally targets structured data from databases and similarly structured formats. Web scraping is a kind of data scraping that targets and extracts unstructured data from web pages.

As more businesses become reliant on data analytics for operations, business intelligence, and decision-making, the demand for both raw and processed data is on the rise. Gathering up-to-date and reliable data using traditional methods can be time-consuming and expensive—especially for smaller businesses with limited user bases. Using automated tools to “scrape” data from multiple sources, businesses can cast a wider net for the kind and amount of information they gather.

There are a number of approaches to data scraping and a wide variety of tools. Depending on the use case, there are also legal and ethical concerns to keep in mind around what data is gathered and how it is used.

How Data Scraping Works

Data scraping is done using code that searches the website or other source and retrieves the sought-after information. While it’s possible to write the code manually, numerous programming libraries—both free and proprietary—contain prewritten code in a number of programming languages that can be used to automate the task.

The programmer defines search criteria that tells the code what to look for. The code then communicates with the targeted data source by sending countless requests for data, interpreting the source’s response, and meticulously sifting through those responses to pick out the data that meets the criteria. Results can include databases, spreadsheets, or plain text files, for example, which can all be further cleaned for analysis.

Every website or data source is structured differently. While some web scrapers are capable of navigating a wide variety of layouts automatically, being unprepared could cause them to improperly scrape or miss some data, leading to incomplete or inaccurate sets. A web inspector tool can map and navigate all the parts and resources of a web page, including its HTML code, JavaScript elements, and web applications, better preparing the web scraper for what it will encounter.

Popular Data Scraping Techniques

Data can be scraped in more than one way—while no technique is outright better than another, each tends to work best in the specific scenario for which it was designed. Here’s a look at some of the most popular data scraping techniques.

API Access

Application programming interfaces, or APIs, are considered direct bridges between online websites or applications and outside communicators. Many websites with high-density data offer free or paid access to their own, integrated APIs, letting them provide data access while controlling how and how often site data is scraped.

If a website or application has an API, it’s best to use it over any alternative scraping method. API access ensures consistency and reduces the risk of violating the website’s terms of service (ToS). This is particularly important when scraping user-generated data on social media platforms, as some of it may be protected under personal information privacy laws and regulations.

DOM Parsing

Document object model (DOM) parsing provides a hierarchical representation of a web page’s data and structure. There’s a tool for this—DOM Parser is a JavaScript library capable of parsing XML and HTML documents by navigating and mapping a web page as a hierarchical, tree-like structure to locate the most important elements.

DOM parsing makes it possible to more interactively select which elements to scrape using class names, IDs, or nested relationships. It also ensures that the relations and dynamics between the various data points aren’t lost in the extraction process.

HTML Parsing

In HTML parsing, the data scraping tool reads the target web page’s source code, usually written in HTML, and extracts specific data elements that might not otherwise be accessible using another technique—for example, distinguishing data based on tags, classes, and attributes.

HTML parsing enables users to more easily navigate the complex structure of a website, granting access to as much data as possible and ensuring precise and reliable extraction.

Vertical Aggregation

Vertical aggregation is a specialized type of data scraping that works as a more comprehensive approach across various websites and platforms in the same niche. Instead of scraping a wide set of data once, vertical aggregation lets you focus data scraping efforts over a set period of time.

For example, vertical aggregation could be used to scrape job listings from different employment sites or the change in prices and discounts on e-commerce sites. The collected data is up-to-date and best used to support decision-making processes in niche-specific data fields.

Data Scraping Use Cases

Accurate, up-to-date data is a goldmine of knowledge and information for enterprises. Depending upon how it was processed and analyzed, it can be used for a wide range of purposes. Here are some of the most common business use cases for data scraping.

Brand, Product, and Price Monitoring

For businesses that want to keep watch over their brand and products online as well as their competitors’ brands and products, data scraping provides a high-volume means of monitoring everything from social media mentions to promotions and pricing information. Using data scraping to gather up-to-the-minute data allows them to adjust and adapt strategies in real time.

Consumer Sentiment Analysis

The success of products and services can hinge on consumer perceptions. By scraping reviews, comments, and discussions from online review sites and platforms, businesses can gauge the pulse of the consumer. Aggregating this data paints a clearer picture of overall sentiment—positive, neutral, or negative—to assist companies in refining their offerings, addressing concerns, and amplifying strengths. It acts as a feedback loop, helping brands maintain their reputation and cater better to their consumer base.

Lead Generation

Automating the extraction of data and insights from professional networks, directories, and industry-specific websites gives businesses a valuable way to find clients and customers online. This proactive approach facilitates outreach by giving sales and marketing teams a head start. Scraping massive amounts of data and running it through an analytical model enables businesses to connect with the right prospects more efficiently than manually searching potential leads.

Market Research

Having up-to-date and relevant data is paramount to successful marketing. Data scraping lets businesses collect vast amounts of data about competitors, market trends, and consumer preferences. When cleaned, processed, and analyzed for patterns and trends, data can provide insights that drive marketing campaigns and strategies by identifying gaps in the market and predicting upcoming trends.

Legal and Ethical Considerations of Data Scraping

Data scraping is a broad term that encompasses a lot of different techniques and use cases with varying intent. In the U.S., it’s generally legal to scrape publicly available data such as job postings, reviews, and social media posts, for example.

Scraping personal data may conflict with regional or jurisdictional regulations like the European Union’s General Data Protection Regulation (GDPR) and the California Consumer Privacy Act. The definition of personal information may vary depending on the policy. The GDPR, for example, forbids the scraping of all personal data, while the CCPA only prohibits non-publicly available data—anything made available by the government is not covered.

As a general rule, it’s a good idea to be cautious when scraping personal data, intellectual property, or confidential information. In addition, some websites explicitly state that they don’t allow data scraping.

There are also ethical concerns about the effects of data scraping. For example, sending too many automated requests to a particular website using a data scraping tool could slow or crash the site. It could also be misconstrued or flagged as a distributed denial of service (DDOS) attack, an intentional and malicious effort to halt or disrupt traffic to a site.

Data Scraping Mitigation

Websites can employ a variety of measures to protect themselves from unauthorized data scraping outside of their dedicated API. Some of the most common include the following:

- Rate limiting—limiting certain types of network traffic to reduce strain and prevent bot activity.

- CAPTCHAs—requiring users to complete an automated test to “prove” they are a human visitor.

- Robots.txt—a text file containing instructions defining what content bots and crawlers can use and what’s off limits.

- Intelligent traffic monitoring—using automated tools to monitor traffic for tell-tale bot patterns and behaviors.

- User-agent analysis—monitoring any software that tries to retrieve content from a website and preventing suspected scraping tools.

- Required authentication—not allowing access to any unauthorized user or software.

- Dynamic website content—web content that changes based on user behavior that can recognize and block scraping tools.

Data scraping tools consist of code written in a range of programming languages. Python is the most popular language for this purpose, because of its ease of use, dynamic type language and accessible syntax, and community support. It also offers a number of data scraping libraries. Other popular languages for data scraping include JavaScript and R. Here are a few of the most commonly used data scraping tools.

Beautiful Soup

Beautiful Soup is a Python library of prepackaged open-source code that parses HTML and XML documents to extract information. It’s been around since 2004 and provides a few simple methods as well as automatic encoding options.

Scrapy

Scrapy is another free, open-source Python framework for performing complex web scraping and crawling tasks. It can be used to extract structured data for a wide range of uses, and can be used for either web scraping or API scraping.

Octoparse

Octoparse is a free, cloud-based web scraping tool. It provides a point-and-click interface for data extraction, allowing even non-programmers to efficiently scrape data from a wide range of sites, and uses an advanced machine learning algorithm to locate data.

Parsehub

Parsehub is a cloud-based app that provides an easy-to-use graphical user interface, making it possible for non-programmers to use it intuitively to find the data they want. There’s a free version with limits. The standard version is $149 per month, and the Professional version costs $499 per month.

Data Scraping vs. Data Crawling

Data scraping and data crawling both concern the extraction of information from websites. Data scraping focuses on extracting specific information from numerous web pages on various sites. Data crawling is a broader process, primarily used by search engines.

Web crawlers, also referred to as spiders, systematically scour the web to collect information about each website and web page rather than the information contained within the pages themselves. This information is then indexed for search engine and archival purposes.

Bottom Line: What is Data Scraping?

Data analytics is increasingly critical for businesses looking for a competitive advantage, more streamlined operations, better business intelligence, and data-driven decision-making. At the same time, we’re producing more data than ever before—from online shopping to social media, information about behaviors, interests, and preferences is widely available to anyone who knows where to look.

Data scraping is a way for enterprises to use automated tools to cast a wide net that gathers massive volumes of data that meets the specifications they define. It’s useful for a wide range or purposes, and prebuilt code libraries serve as easy-to-use data scraping tools that make the process feasible for even non-technical users.

Because data scraping can involve personal information, there are legal and ethical concerns. Any enterprise data scraping effort should take regional and jurisdictional regulations into account, and should be reviewed on an ongoing basis to keep pace with changing policies.

Learn more about the pros and cons of big data, of which data scraping is just one component.