Big data best practices enable organizations to use data more precisely, securely, and ethically, allowing them to make better decisions, enhance operational efficiency, and maintain a competitive edge. Enterprises that use big data best practices position themselves for long-term success in data management, scalable infrastructure, and agile development approaches. The data landscape is always evolving. To help you keep pace with those changes, here are our recommendations for big data best practices for 2024 based on emerging trends, technologies, and common enterprise applications.

Implement Data Quality Management Programs

Data quality management is the process of ensuring that data is accurate, complete, and reliable throughout its lifespan. It includes methods for data cleansing, validation, and standardization.
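As a rough illustration of how cleansing, validation, and standardization can fit together, here is a minimal Python sketch. The field names (`customer_id`, `email`, `signup_date`), the rules, and the function names are hypothetical assumptions chosen for the example; a real program would derive its rules from the organization's own schema and tooling.

```python
from dataclasses import dataclass, field
from datetime import datetime
from typing import Optional

# Hypothetical schema: these field names are assumptions for illustration only.
REQUIRED_FIELDS = ("customer_id", "email", "signup_date")


@dataclass
class QualityReport:
    clean: list = field(default_factory=list)
    rejected: list = field(default_factory=list)  # (record, reason) pairs


def standardize(record: dict) -> dict:
    """Standardization: trim whitespace and lowercase email addresses."""
    out = {k: v.strip() if isinstance(v, str) else v for k, v in record.items()}
    if isinstance(out.get("email"), str):
        out["email"] = out["email"].lower()
    return out


def validate(record: dict) -> Optional[str]:
    """Validation: return a rejection reason, or None if the record passes."""
    for name in REQUIRED_FIELDS:
        if not record.get(name):
            return f"missing {name}"
    email = record["email"]
    if not isinstance(email, str) or "@" not in email:
        return "malformed email"
    try:
        datetime.strptime(record["signup_date"], "%Y-%m-%d")
    except (ValueError, TypeError):
        return "bad signup_date"
    return None


def run_quality_checks(records: list) -> QualityReport:
    """Cleansing: keep valid, de-duplicated records and log the rest."""
    report = QualityReport()
    seen_ids = set()
    for raw in records:
        rec = standardize(raw)
        reason = validate(rec)
        if reason is None and rec["customer_id"] in seen_ids:
            reason = "duplicate customer_id"
        if reason is not None:
            report.rejected.append((rec, reason))
        else:
            seen_ids.add(rec["customer_id"])
            report.clean.append(rec)
    return report
```

Keeping rejected records alongside the reason, rather than silently dropping them, makes it easier to trace quality problems back to their source.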

Why It Matters

Data quality management protects against errors and inconsistencies within datasets, laying the groundwork for sound decision-making. The growing emphasis on real-time stream processing makes high-quality data even more important for fast, accurate analytics. Poor data quality can undermine the validity of real-time insights and lead to incorrect decisions.

AI-augmented development, which integrates machine learning (ML) and AI technologies into the software development process, depends heavily on reliable data to train ML models effectively. That makes data quality management critical to the success of AI initiatives.

Build More Scalable Infrastructures

Scalable infrastructure entails creating systems that can efficiently manage growing data volumes and user demands. This involves using cloud resources, deploying distributed computing, and optimizing storage solutions.

Why It Matters

Scalable infrastructure allows for better management of the growing data landscape. In 2024, the notion of continuous threat exposure management (CTEM) highlights the importance of scalability in dealing with increasing security risks.

A scalable architecture helps ensure that the system can adapt to changing security requirements and handle risks effectively. Sustainable technology platform engineering is likewise consistent with best practices for scalability, because it stresses the infrastructure’s long-term durability to enable sustainable and efficient data processing.

Employ Agile Development Methodologies

Agile development is a flexible software development approach that focuses on teamwork, customer feedback, and incremental updates. It uses frameworks like Scrum and Kanban for efficient project management and to help create a culture of continuous improvement. Agile teams work closely together, resulting in better communication and faster adaptation to changing business requirements.

Why It Matters

Agile development is critical for responding quickly to changing business requirements and technological advances. Agile’s iterative nature enables teams to incorporate feedback throughout the development process, helping ensure that the end result meets user expectations.

In 2024, AI-augmented development will require agile techniques to incorporate and adapt AI technology smoothly. Intelligent applications, another trend, also benefit from agile development because it allows enterprises to quickly build intelligent features and functions into their apps, improving user experiences and helping them remain competitive in a changing market.

Safeguard Data With Robust Security Measures

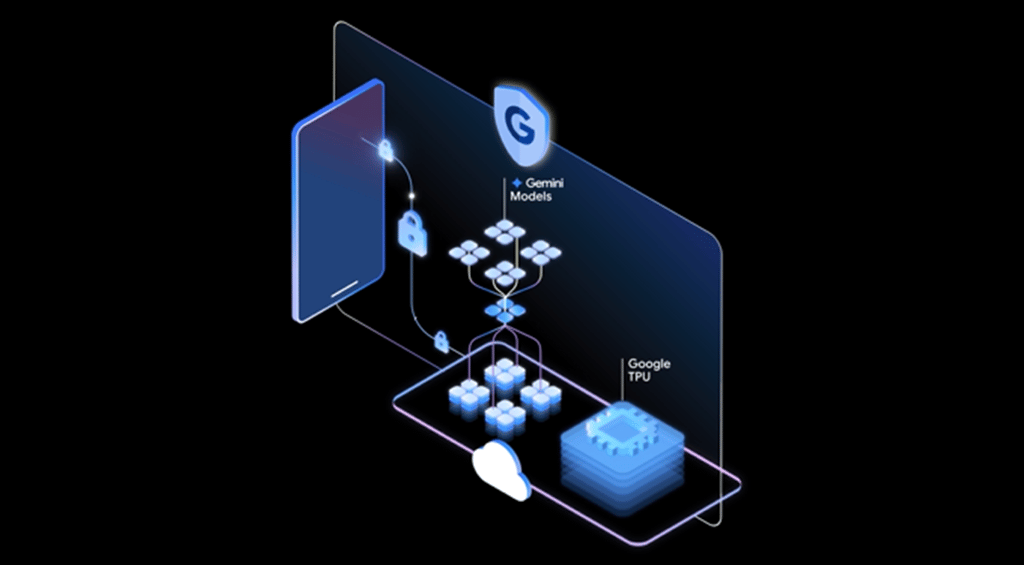

Security measures are a comprehensive set of techniques used to protect data against unauthorized access, breaches, and malicious activity. These safeguards include strong encryption, strict access controls, ongoing monitoring, and proactive threat detection. Organizations use a multi-layered strategy to build a secure environment that protects the confidentiality, integrity, and availability of their data.
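To make the access-control and monitoring layers more concrete, here is a minimal Python sketch of a role-based permission check that writes every decision to an audit log. The roles, permissions, and function names are hypothetical assumptions for illustration; production systems would normally rely on an identity provider and a tamper-resistant log store rather than an in-memory list.

```python
from datetime import datetime, timezone

# Hypothetical role-to-permission mapping (an assumption for this sketch).
ROLE_PERMISSIONS = {
    "analyst": {"read"},
    "engineer": {"read", "write"},
    "admin": {"read", "write", "delete"},
}

# In-memory audit trail; a real system would persist this securely.
audit_log = []


def is_allowed(role: str, action: str) -> bool:
    """Deny by default: unknown roles get an empty permission set."""
    return action in ROLE_PERMISSIONS.get(role, set())


def access(user: str, role: str, action: str, resource: str) -> bool:
    """Check a request and record the decision, allowed or not."""
    allowed = is_allowed(role, action)
    audit_log.append({
        "time": datetime.now(timezone.utc).isoformat(),
        "user": user,
        "role": role,
        "action": action,
        "resource": resource,
        "allowed": allowed,
    })
    return allowed
```

Logging denied requests as well as granted ones is a deliberate choice here, since repeated denials are often the signal that monitoring and threat detection need.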

Why It Matters

Implementing robust security measures protects data systems from cyber threats and sophisticated attacks. Encryption and access controls are essential layers: encryption transforms sensitive information into unreadable ciphertext, while access controls restrict who can view or change it. Continuous monitoring, real-time surveillance, and AI Trust, Risk, and Security Management (AI TRiSM) are also key aspects of these measures.

Industry cloud platforms also require robust security measures to safeguard data stored in the cloud. Regular monitoring and auditing of access logs, combined with advanced threat intelligence, contribute to a resilient security posture.

Use Data Ethically

Ethical data usage entails creating and following ethical norms for collecting, storing, and using data to ensure accountable and transparent practices. This involves gaining informed consent, anonymizing sensitive information, and complying with privacy regulations.
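One common way to reduce exposure of sensitive fields is to replace them with keyed-hash tokens. The Python sketch below uses HMAC-SHA256 from the standard library; the field names and the `pseudonymize` function are hypothetical assumptions for the example. Note that this is pseudonymization rather than full anonymization: anyone holding the key can reproduce the tokens, and under GDPR pseudonymized data is still treated as personal data.

```python
import hashlib
import hmac

# Hypothetical list of sensitive fields (an assumption for this sketch).
SENSITIVE_FIELDS = {"name", "email"}


def pseudonymize(record: dict, secret_key: bytes) -> dict:
    """Replace sensitive values with HMAC-SHA256 tokens.

    The same value and key always produce the same token, so records
    can still be joined or counted without exposing the raw value.
    """
    out = {}
    for name, value in record.items():
        if name in SENSITIVE_FIELDS and value is not None:
            digest = hmac.new(secret_key, str(value).encode("utf-8"), hashlib.sha256)
            out[name] = digest.hexdigest()
        else:
            out[name] = value
    return out
```

A keyed hash is used instead of a plain hash so that tokens cannot be reversed simply by hashing a list of likely names or email addresses; the key itself then has to be stored and rotated like any other secret.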

Why It Matters

Ethical data usage is critical for building trust among consumers and stakeholders. It protects people’s privacy and rights, reduces the risk of data misuse, and supports compliance with legal and regulatory frameworks like the European Union’s General Data Protection Regulation (GDPR) and the California Consumer Privacy Act (CCPA).

AI TRiSM, one of the most recent trends for 2024, highlights the need for ethical data practices in AI systems. Responsible data use reduces potential biases in AI systems, resulting in fairer and more equitable outcomes.

Monitor and Optimize Continuously

Continuous monitoring and optimization means tracking system performance, identifying bottlenecks, and making adjustments to increase the efficiency of big data operations. Advanced monitoring tools, performance analytics, and automated optimization techniques help data processing systems run smoothly and adapt to changing needs.
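As a simple illustration of bottleneck detection, the Python sketch below keeps a rolling window of recent durations for each pipeline stage and flags a run that takes much longer than the recent average. The `StageMonitor` class, the window size, and the 2x threshold are hypothetical assumptions; dedicated monitoring tools offer far richer statistics and alerting.

```python
from collections import defaultdict, deque
from statistics import mean


class StageMonitor:
    """Flags pipeline stages whose latest run is unusually slow."""

    def __init__(self, window: int = 20, threshold: float = 2.0, min_samples: int = 3):
        self.threshold = threshold      # multiple of the rolling mean that counts as slow
        self.min_samples = min_samples  # history needed before anything is flagged
        self.history = defaultdict(lambda: deque(maxlen=window))

    def record(self, stage: str, seconds: float) -> bool:
        """Store a duration and return True if it looks like a bottleneck."""
        past = self.history[stage]
        flagged = (
            len(past) >= self.min_samples
            and seconds > self.threshold * mean(past)
        )
        past.append(seconds)
        return flagged
```

In use, each stage would call `record("transform", elapsed_seconds)` after it finishes, and a `True` result could trigger an alert or an automated scaling action.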

Why It Matters

Continuous monitoring and optimization help maintain the health and effectiveness of big data infrastructures by providing real-time insights that enable early detection of potential issues and proactive decision-making. This practice helps teams identify bottlenecks and address them promptly.

In 2024, sustainable technology platform engineering aligns with continuous monitoring and optimization, supporting long-term efficiency and better resource utilization. Implementing monitoring tools and automated optimization procedures contributes to agility in big data operations. As data volumes and processing demands increase, continuous monitoring and optimization help organizations navigate the evolving landscape of big data.

Provide Workforce Skill Development

Skill development is a strategy of investing in training and development programs designed to improve the abilities of data professionals. This proactive approach helps ensure that employees have the skills they need to handle the complex and ever-changing world of big data. By staying current on the newest technologies and processes, they can better help the business extract important insights, make informed decisions, and innovate in the field of data analytics.

Why It Matters

Skill development provides data workers with technical expertise and fosters a culture of continual learning. As AI-augmented development and intelligent applications become more popular, a skilled workforce is required to integrate AI into processes and navigate industry cloud platforms.

Investing in skill development creates a talent pool capable of responding to emerging challenges and seizing new opportunities, boosting creativity and adaptability. A highly qualified staff provides a competitive edge, allowing organizations to fully leverage big data and sustain market leadership.

Bottom Line: Refine Big Data through Best Practices

Continually refining big data processes through the strategic application of best practices is a practical necessity for enterprises. This strategy strengthens data analytics efforts and gives data analysts the tools and procedures they need to extract significant insights. Organizations can navigate the ever-changing environment of big data with resilience and sustained success by staying nimble, adopting ethical data usage, implementing security measures, investing in workforce skill development, and maintaining scalable infrastructure.
