The way enterprises approach big data is rapidly changing.

Just a few short years ago, big data was a hot buzzword, and most organizations were only experimenting with Hadoop and related technologies. Today, big data, particularly big data analytics, has evolved to become a critical part of most businesses’ strategies, and organizations are facing intense pressure to keep up with rapid advances in the field.

The NewVantage Partners Big Data Executive Survey 2018 found that big data projects — and the benefits derived from those projects — have become nearly universal. Among respondents, 97.2 percent of executives said their companies are working on big data or artificial intelligence (AI) initiatives, and 98.6 percent said their firms are trying to create a data-driven culture, up from 85.5 percent in 2017. A strong majority (73 percent) also said that they had already obtained measurable value as a result of their big data initiatives.

A separate 2018 Big Data Maturity Survey conducted by vendor AtScale found that 66 percent of organizations consider big data to be strategic or game-changing, compared to only 17 percent who still consider the technology experimental. In addition, 95 percent of respondents planned to do as much or more with big data in the next three months.

But what exactly will they be doing with their big data?

A number of different trends are impacting big data initiatives, but four overarching themes are emerging as key factors influencing big data in 2018: cloud computing, machine learning, data governance and the need for speed.

1. Cloud Computing

Analysts believe big data is moving to the cloud in a big way. According to Forrester’s Brian Hopkins, “Global spending on big data solutions via cloud subscriptions will grow almost 7.5 times faster than on-premise subscriptions. Furthermore, public cloud was the number one technology priority for big data according to our 2016 and 2017 surveys of data analytics professionals.” He said the cost advantages and innovation available through public cloud services will prove irresistible to most enterprises.

And surveys appear to support those conclusions.

In the AtScale report, 59 percent of respondents said that they have already deployed big data in the cloud, and a whopping 77 percent of respondents projected that some or all of their big data deployment will be in the cloud.

The Teradata State of Analytics in the Cloud report found even higher demand for cloud-based big data analytics. Eighty-three percent of those surveyed said the cloud is the best place to run analytics, and 69 percent said they want to run all their analytics in the cloud by 2023.

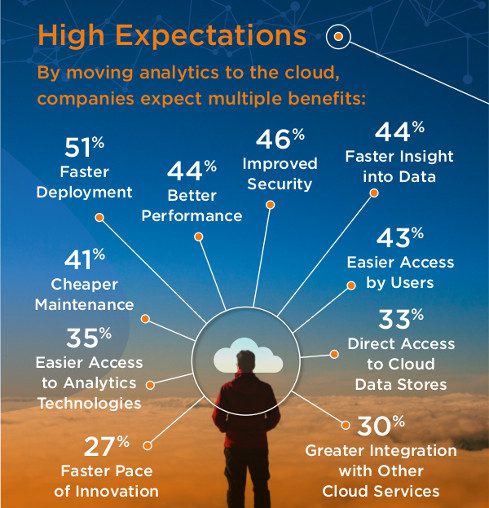

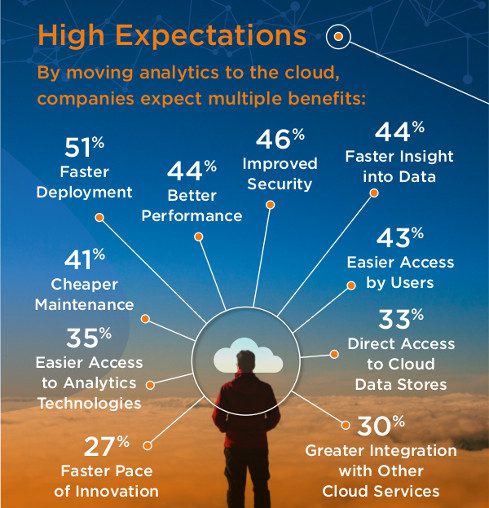

Why are they so eager to move to the cloud? The expected benefits of cloud analytics included faster deployment (51 percent), improved security (46 percent), better performance (44 percent), faster insight into data (44 percent), easier access by users (43 percent), and cheaper maintenance (41 percent).

Image Source: Teradata

Organizations continue to migrate their data storage to public cloud providers, and when data already resides in the cloud, it’s faster, easier and less expensive to do big data analytics in the cloud as well.

In addition, many of the cloud providers offer AI and machine learning tools that make the cloud even more attractive.

2. Machine Learning and Artificial Intelligence

Machine learning, the branch of AI concerned with teaching computers to learn without being explicitly programmed, has become so intrinsically connected with big data analytics that the two terms are sometimes conflated. In fact, the cover of this year’s annual NewVantage big data survey was redesigned to show that it covered both big data and AI. The report authors wrote, “Big Data and AI projects have become virtually indistinguishable, particularly given that machine learning is one of the most popular techniques for dealing with large volumes of fast-moving data.”

When that survey asked executives to pick which big data technology would have the greatest disruptive impact, the top vote-getter, selected by 71.8 percent of respondents, was AI. That was a dramatic increase from 2017, when just 44.3 percent of respondents said the same thing. And it’s particularly noteworthy that AI beat out cloud computing (12.7 percent) and blockchain (7.0 percent) to take that spot on the list.

John-David Lovelock, research vice president at Gartner, agrees with those executives. “AI promises to be the most disruptive class of technologies during the next 10 years due to advances in computational power, volume, velocity and variety of data, as well as advances in deep neural networks (DNNs),” he stated.

His firm recently predicted, “Global business value derived from artificial intelligence (AI) is projected to total $1.2 trillion in 2018, an increase of 70 percent from 2017.” Looking ahead, the firm added, “AI-derived business value is forecast to reach $3.9 trillion in 2022.”

Given that potential business value, it’s no surprise that enterprises plan to invest heavily in machine learning and related technologies. According to IDC, “Worldwide spending on cognitive and artificial intelligence (AI) systems will reach $19.1 billion in 2018, an increase of 54.2 percent over the amount spent in 2017.”

3. Data Governance

But while the potential benefits available through cloud computing and machine learning are driving enterprises to invest in these big data technologies, organizations still face significant hurdles related to big data.

One of the biggest is how to ensure the accuracy, availability, security and compliance of all that data.

When the AtScale survey asked respondents to name their biggest challenge related to big data, governance was the number two vote-getter, right behind skill set, which has been the number one challenge cited in the survey every year. Back in 2016, governance was at the bottom of the list of challenges, so its rise to second place is particularly dramatic. And organizations are now more concerned with data governance than with performance, security or data management.

Part of the reason for the renewed concern may lie in the recent Facebook and Cambridge Analytica scandal. The story demonstrates all too clearly the potential public relations nightmare that can occur from losing track of where your data is going and failing to properly protect users’ or customers’ privacy.

Another big force for change is the European Union’s General Data Protection Regulation (GDPR), which goes into effect on May 25, 2018. It requires organizations that hold personal data of individuals in the EU to meet requirements such as breach notification, the right of access, the right to be forgotten, data portability, privacy by design and, for many organizations, the appointment of a data protection officer.

The regulatory change is putting increased pressure on organizations to ensure that they know what data they have and where it resides and that they are properly securing that data. It’s a tall order, and it is requiring many enterprises to put on the brakes and rethink their big data strategies.

4. The Need for Speed

At the same time they are feeling the need to slow down to deal with data governance issues, many enterprises are also experiencing demand for ever-faster big data analytics.

In the NewVantage survey, 47.8 percent of executives said that their primary use of big data was for “near real-time, intra-day dashboards and operational reporting, or for real-time, interactive, or streaming customer-facing or mission-critical applications.” That’s a significant development because the traditional use for data analytics has been to perform batch reporting on a daily, weekly or monthly basis.

Similarly, a Syncsort survey found that 60.4 percent of respondents were interested in real-time analytics.

In order to meet that need for real-time or near real-time performance, organizations are increasingly turning to in-memory technology. Because processing data in memory (RAM) is much, much faster than accessing data stored on a hard drive or even a solid state drive, in-memory technology can lead to extraordinary speed improvements.

In fact, SAP claims that its proprietary HANA technology has helped some companies speed up their business processes by as much as 10,000 times. While most companies don’t see gains of that magnitude, SAP isn’t alone in making dramatic claims for in-memory technology. Apache Spark, an open source big data analytics engine that runs in memory, boasts that it can run workloads up to 100 times faster than Hadoop’s standard MapReduce engine.

Enterprises seem to be taking note of these performance improvements. Vendor Qubole has reported that among its customers Apache Spark usage in terms of compute hours grew 298 percent between 2017 and 2018. When you look at the number of commands run on Apache Spark, the growth is even more impressive: total commands run on Spark increased 439 percent between 2017 and 2018.
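To put the Qubole percentages in perspective: an increase of p percent means the new value is (1 + p/100) times the old one, assuming both figures are measured against the 2017 baseline. Writing H for Spark compute hours and C for Spark commands run (symbols introduced here only for illustration), the reported growth works out roughly as follows:

```latex
\frac{H_{2018}}{H_{2017}} = 1 + \frac{298}{100} = 3.98,
\qquad
\frac{C_{2018}}{C_{2017}} = 1 + \frac{439}{100} = 5.39
```

In other words, under that assumption Spark compute hours roughly quadrupled and Spark commands grew more than fivefold in a single year.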

In some ways, this need for speed is driving the three other big data macro trends, as well. Organizations move big data to the cloud, in part, because they hope for performance gains. They invest in machine learning and AI, at least in part, because they hope to gain faster, better insights. And they are experiencing challenges related to data governance and compliance, at least in part, because they have been so fast to embrace big data technologies without first solving all their data quality, privacy, security and compliance issues.

In the near future, expect all four of these trends to continue and intensify as organizations look for new ways to use big data to disrupt their industries and gain competitive advantage.