

High-performance computing (HPC) would be easier to understand if it were described by a single definition. Conceptually, most HPCs fall somewhere between workstations and supercomputers. However, while supercomputers are also HPCs, not all HPCs are supercomputers.

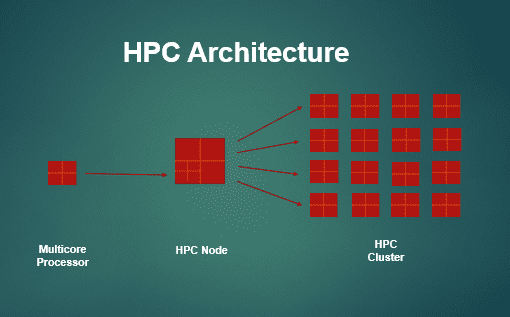

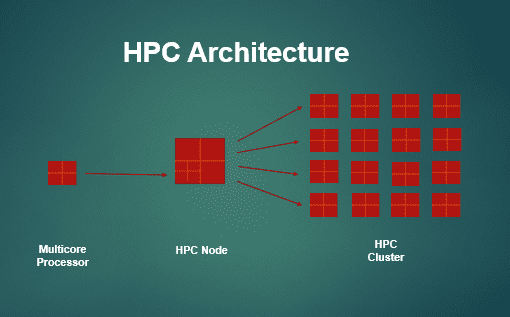

One thing that distinguishes HPCs is their architecture. Rather than having a monolithic, single-box design, HPCs use clusters of servers that work in parallel to perform highly complex calculations.

While the definition is diverse, one thing is clear: Data centers across industries use HPCs to solve difficult, compute-intensive problems cost-effectively. HPCs are a key element in handling today’s demanding computer workloads, from Big Data to predictive analytics to machine learning and artificial intelligence.

HPC Architecture

HPC architecture is not limited to any set number of clusters. Instead, the number of clusters is defined by the processing power necessary to solve a particular problem.

What is an HPC Cluster?

An HPC cluster is a set of linked computers that use parallel processing to achieve higher computing power than could be achieved using just one of the computers in the cluster. The computers within a cluster are called “nodes.” A small cluster may use as few as four nodes, and a large cluster may have thousands of nodes. (A supercomputer has thousands of nodes, for example.) Regardless of the node count, a cluster works by dividing a job among its nodes so that they process it in parallel.
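The split, compute, and combine pattern behind a cluster can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the function names are hypothetical, worker threads stand in for nodes, and the workload (a sum of squares) is arbitrary. In CPython, threads will not actually speed up CPU-bound work like this; a real cluster would distribute the chunks across separate machines.

```python
from concurrent.futures import ThreadPoolExecutor


def split_range(n, parts):
    """Split range(n) into `parts` contiguous (start, stop) chunks."""
    step, extra = divmod(n, parts)
    chunks, start = [], 0
    for i in range(parts):
        # The first `extra` chunks take one additional item each.
        stop = start + step + (1 if i < extra else 0)
        chunks.append((start, stop))
        start = stop
    return chunks


def partial_sum_of_squares(bounds):
    """Work done by one hypothetical 'node' on its own chunk."""
    start, stop = bounds
    return sum(i * i for i in range(start, stop))


def cluster_sum_of_squares(n, nodes=4):
    """Divide the job among `nodes` workers, then combine their results."""
    chunks = split_range(n, nodes)
    with ThreadPoolExecutor(max_workers=nodes) as pool:
        partials = list(pool.map(partial_sum_of_squares, chunks))
    return sum(partials)


if __name__ == "__main__":
    print(cluster_sum_of_squares(1_000_000, nodes=4))
```

The point of the sketch is the shape of the computation: each worker only sees its own chunk, and a final step merges the partial results.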

What is an HPC Processor?

The architecture of the nodes includes an HPC processor. The HPC processor may use multicore central processing units (CPUs) or general-purpose graphics processing units (GPGPUs). CPUs are the traditional “brain” of a computer. GPGPUs are a general-purpose version of GPUs, which process graphics and images. Generally, the more cores a processor has, the more work it can do in parallel. Multicore processors, like clusters, enable parallel processing.

Multicore processors overcome some of the physical limitations of silicon. According to Moore’s Law, the number of transistors packed into an integrated circuit (in this case, a processor) doubles about every two years. When Moore’s Law proved to be unsustainable, at least with the technology available in the early 2000s, chip designers had to come up with another solution that could enable faster processing speeds. Their solution was multicore designs that split workloads up among several cores. To share a workload across multiple cores, the cores must be able to communicate with each other.
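The doubling that Moore’s Law describes compounds quickly, which is part of why it was hard to sustain. As a rough sketch, the projection can be written as a one-line formula. The function name and the starting count below are hypothetical, and the doubling period is left as a parameter; about two years is the commonly cited figure.

```python
def projected_transistors(start_count, years, doubling_period_years=2.0):
    """Project a transistor count forward assuming steady doubling.

    count = start_count * 2 ** (years / doubling_period_years)
    """
    if doubling_period_years <= 0:
        raise ValueError("doubling_period_years must be positive")
    return start_count * 2 ** (years / doubling_period_years)


if __name__ == "__main__":
    # Hypothetical starting point: a chip with 1,000 transistors.
    for years in (0, 2, 10, 20):
        print(years, projected_transistors(1_000, years))
```

Ten years at a two-year doubling period means five doublings, or a 32-fold increase.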

Linux vs. Windows

HPCs, like other types of computers, need more than hardware to run. They need an operating system that enables users to run applications. HPCs typically use either a Linux or Windows operating system. Linux is a family of free and open-source operating systems based on the Linux kernel. Windows is Microsoft’s commercial operating system. Linux tends to be more popular for high-performance computing than Windows, in part because Linux is a Unix-like operating system and UNIX has long been used on supercomputers.

In short, HPC architecture is layered: multicore processors sit inside nodes, and nodes are linked into clusters, which together create an exceptionally robust computing platform.

Benefits of HPC

The main benefits of HPC are speed, a flexible deployment model, fault tolerance, and total cost of ownership, although the actual benefits realized can vary from system to system.

- Speed. High performance is synonymous with fast calculations. The speed of an HPC depends on its configuration. That is, more clusters and cores enable faster parallel processing. Performance is also affected by the software that runs on the machine, including the operating system design and application design, and by the complexity of the problem being solved.

- On-premise or cloud. HPC can sit behind a firewall in an enterprise or be made available as a cloud computing service option. The advantage of the latter is elasticity, meaning compute resources can be scaled up or down as necessary. Some cloud providers also allow users to choose CPUs or GPUs so they can custom-tailor compute resources to suit the requirements of a particular project.

- Fault tolerance. If one part of the system fails, the rest of the HPC system keeps running. Given that HPC workloads are compute heavy, fault tolerance helps the computations continue with minimal interruption.

- Total cost of ownership. Competitive pressure among manufacturers causes them to continuously improve the ROI of their designs; however, sticker price is only part of TCO. Total cost also includes power, cooling, and maintenance if the HPC is on-premise. Cloud vendors tout TCO as a benefit because customers don’t pay for the procurement, operation, or maintenance costs of HPC resources on an ongoing basis. They only pay for the amount of resources they use.
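Returning to the speed point in the list above: adding cores helps, but software design limits how much. One standard way to reason about this is Amdahl’s law, which this article does not name and which is brought in here only as an illustration. It estimates the best-case speedup when a given fraction of a program can run in parallel. The function name and the sample fractions below are assumptions chosen for the example.

```python
def amdahl_speedup(parallel_fraction, cores):
    """Best-case speedup under Amdahl's law.

    speedup = 1 / ((1 - p) + p / n)
    where p is the fraction of work that can run in parallel
    and n is the number of cores.
    """
    if not 0.0 <= parallel_fraction <= 1.0:
        raise ValueError("parallel_fraction must be between 0 and 1")
    if cores < 1:
        raise ValueError("cores must be at least 1")
    serial_fraction = 1.0 - parallel_fraction
    return 1.0 / (serial_fraction + parallel_fraction / cores)


if __name__ == "__main__":
    # Hypothetical application in which 95% of the work parallelizes.
    for cores in (4, 16, 64, 1024):
        print(cores, round(amdahl_speedup(0.95, cores), 2))
```

Under this model, a program that is 95% parallel can never run more than 20 times faster, no matter how many cores are added, because the remaining 5% still runs serially.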

HPC Use Cases

Many industries use HPC for problem-solving, including life sciences, manufacturing, and oil and gas, and more use cases are appearing over time. Some examples include:

- Real-time securities trading

- Virtual technical support

- Disease prevention and treatment

- Oil and mineral deposit location discovery

Jobs in HPC

High-performance computing jobs vary in description, like many jobs related to technology. There are technical roles and non-technical roles, such as:

- HPC application or firmware tester

- HPC application developer

- HPC marketing manager/director/VP

- HPC UNIX Systems administrator

- Autonomous car engineer (who knows how to apply HPC and virtual analysis technologies)

HPC Applications

Although a supercomputer is a type of HPC, most references to HPCs refer to a configuration that is less powerful and less expensive than a supercomputer. The difference between the two has to do with the number of cores, clusters and cost. Essentially, if the HPC has more cores and clusters, it is more expensive. Most businesses can’t afford supercomputers, so depending on their requirements they choose HPCs, HPC cloud computing, or regular cloud computing.

HPC cloud computing is HPC capacity delivered as a cloud-based service. It includes two components: compute and storage. Both are needed because the outcome of the calculations needs to be stored somewhere.

Given the sheer size of the problems HPC is used to solve, it makes more sense to store the output where the calculations were done (in the cloud) rather than worrying about compression or risking the possibility of losing data due to network-related communications failures or potential security vulnerabilities.

Another compelling aspect of HPC cloud computing is the flexibility of processors used. Specifically, it is possible to choose CPUs or GPUs depending on the nature of the job that needs to be computed. GPUs are particularly well-suited to simulations, imaging and modeling.

Note: The virtual nature of cloud computing and storage in the context of HPC should not be confused with hyperconverged infrastructure (HCI), which applies to data centers. HCI virtualizes computing, storage, and network resources. Because those resources are virtual instead of physical, they are commonly referred to as being part of a “software-defined” architecture.

Some HPC software applications that take advantage of GPUs include:

- Computational fluid dynamics

- Weather and environmental modeling

General HPC applications include the above, plus:

HPTC (high-performance technical computing) is the application of HPC to technical problems (as opposed to business or scientific problems). Examples of HPTC include:

- Computational fluid dynamics
